Pricing AI products when quality is probabilistic, not guaranteed

The fundamental challenge of pricing artificial intelligence products has shifted. Traditional software treated quality as a binary promise (it works or it doesn't); AI products deliver outcomes that exist on a spectrum of likelihood. This shift forces executives to reconsider decades of established contracting, service-level agreement (SLA) design, and value communication frameworks.

Unlike conventional SaaS products that deliver deterministic results—a CRM system either stores customer data correctly or it fails—AI products operate in a probabilistic domain where the same input can yield different outputs, where accuracy rates replace guarantees, and where "good enough" becomes the new standard of excellence. This fundamental shift creates profound pricing implications that extend far beyond simple cost-plus calculations or competitive benchmarking exercises.

According to research from Vista Equity Partners, while AI models are inherently probabilistic, dealing in likelihoods rather than certainties, successful enterprise AI applications require deeply detailed workflows that bridge this uncertainty with business needs for precision. The tension between probabilistic AI capabilities and deterministic business requirements has created what industry analysts describe as a "pricing paradox"—how do you charge for value when that value itself is uncertain?

The stakes are substantial. OpenAI is projected to lose $14 billion in 2026 while most users pay nothing, highlighting the unsustainable economics of current AI pricing approaches. Meanwhile, 56% of CEOs report no financial returns from AI investments yet, according to PwC's 2026 CEO Survey, creating pressure to develop pricing models that better align costs, value delivery, and risk allocation between vendors and customers.

This deep dive examines the strategic frameworks, emerging models, and practical implementation approaches that leading organizations are deploying to price AI products in an environment where quality is probabilistic rather than guaranteed. Drawing on recent enterprise implementations, vendor strategies from OpenAI, Anthropic, Google, and Microsoft, and expert insights from pricing strategists, we'll explore how to construct pricing architectures that acknowledge uncertainty while still capturing value and building sustainable businesses.

Why Traditional Pricing Models Fail for Probabilistic AI Products

The traditional software pricing playbook assumes a fundamental premise: the product delivers consistent, repeatable outcomes. A payroll system calculates wages correctly every time. An email platform delivers messages reliably. A database stores and retrieves information with near-perfect accuracy. These deterministic systems enabled straightforward pricing models—per-seat subscriptions, tiered feature access, or simple usage-based charges—because the value delivered was predictable and measurable.

Probabilistic AI shatters this foundation. A customer service chatbot might resolve 85% of inquiries correctly but fail on the remaining 15%. A pricing optimization engine might improve margins by 4.79% on average, as reported by organizations implementing AI-driven pricing intelligence, but performance varies significantly across product categories, customer segments, and market conditions. A document analysis tool might extract contract terms with 92% accuracy—impressive by AI standards, yet potentially catastrophic if that 8% error rate affects critical legal obligations.

This probabilistic nature creates multiple pricing challenges that traditional models cannot address effectively. First, the value delivered varies by customer and use case in ways that simple tiering cannot capture. According to research from Bessemer Venture Partners, AI pricing strategy differs fundamentally from SaaS because it must price for outcomes rather than access. A legal AI tool that achieves 95% accuracy might deliver immense value to a law firm handling routine contract reviews but prove inadequate for merger negotiations where even 99% accuracy leaves unacceptable risk.

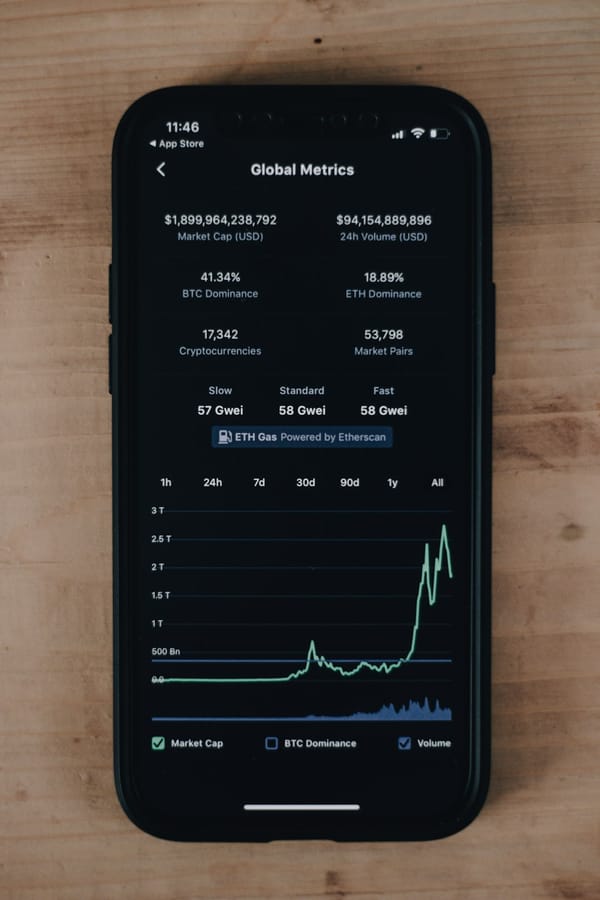

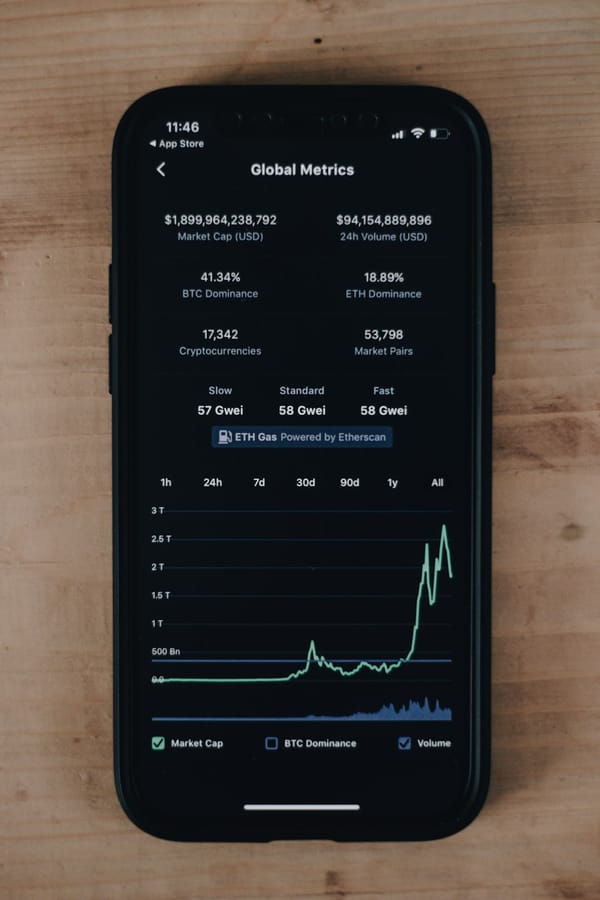

Second, costs are variable and unpredictable in ways that challenge traditional SaaS economics. Unlike conventional software with near-zero marginal costs, AI incurs real per-inference expenses that fluctuate based on model complexity, input length, and computational requirements. OpenAI's token-based pricing—$1.25 per million input tokens and $10 per million output tokens for GPT-5—reflects this reality, but it creates budget uncertainty for customers who cannot predict their consumption patterns. Research shows that 36% of IT leaders report AI overspend, while 70% of AI providers face delivery cost overruns, creating tension on both sides of the transaction.
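To make the budget uncertainty concrete, the sketch below applies the GPT-5 list prices quoted above to two workloads. The request volumes and token counts are hypothetical, chosen only to show how output length drives the bill; `request_cost` is an illustrative helper, not part of any vendor SDK.

```python
# GPT-5 list prices as quoted in the text, in USD per million tokens.
INPUT_PRICE_PER_M = 1.25
OUTPUT_PRICE_PER_M = 10.00


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at the quoted per-token prices."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


# Hypothetical workloads: same request count and prompt size,
# but very different answer lengths.
REQUESTS = 100_000
short_answers = REQUESTS * request_cost(input_tokens=2_000, output_tokens=200)
long_answers = REQUESTS * request_cost(input_tokens=2_000, output_tokens=2_000)

print(f"short answers: ${short_answers:,.2f}")
print(f"long answers:  ${long_answers:,.2f}")
```

With identical request counts and prompts, the longer answers cost five times as much under these assumptions, which is the kind of swing customers find hard to forecast.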

Third, quality metrics are complex and contextual. Traditional SaaS could measure uptime, response time, and error rates with precision. AI products require nuanced evaluation frameworks that consider accuracy, precision, recall, F1 scores, confidence intervals, and domain-specific performance metrics. More importantly, "good" performance is highly contextual—90% accuracy in medical diagnosis carries vastly different implications than 90% accuracy in product recommendations.

The enterprise contract structure itself becomes problematic. Standard SLAs promise specific uptime percentages (99.9%) and performance guarantees with financial penalties for non-compliance. But how do you write an SLA for a probabilistic system? Guaranteeing "85% accuracy on average" sounds reasonable until customers experience the 15% failure rate on their most critical use cases. As one pricing expert noted in research on monetizing AI amid executive pressure and customer uncertainty, both sellers and buyers struggle with defining outcomes (47% of buyers), cost predictability (36%), and value attribution (25%).

According to research from BCG on rethinking B2B software pricing in the agentic AI era, buyers struggle with unknown usage patterns, compute cost fluctuations, and soft ROI metrics, risking what analysts call "renewal cliffs" in 2026 as initial AI hype fades and customers demand proven value. This creates a fundamental mismatch: vendors need pricing that covers variable costs and rewards innovation, while customers need predictability and guaranteed value that probabilistic systems cannot inherently provide.

The failure of traditional models has driven experimentation with alternative approaches, but as we'll explore, each carries its own challenges and trade-offs in the probabilistic quality environment.

The Spectrum of Probabilistic Quality: Understanding What You're Actually Pricing

Before constructing a pricing model for probabilistic AI, executives must develop a sophisticated understanding of what "probabilistic quality" actually means in their specific context. This is not a simple binary of "works/doesn't work" but rather a multi-dimensional spectrum that varies across several critical axes.

Accuracy Distribution and Variance represents the first dimension. A customer service AI might average 85% successful resolution, but this masks critical variation. Performance might range from 95% on simple password resets to 60% on complex billing disputes. The distribution matters enormously for pricing because customers experience the variance, not just the average. Research on deterministic versus probabilistic AI from Elementum AI highlights that enterprise leaders need both types of intelligence—deterministic for compliance and critical processes, probabilistic for recommendations and optimization—because the variance in probabilistic outputs creates risk that must be managed.

Consider the implications: if you price based on average performance (85% accuracy), customers experiencing the lower end of the distribution (60% on their critical use cases) will perceive the product as overpriced and underperforming. Conversely, those experiencing the high end (95%) might accept higher prices. Pricing therefore needs to reflect the performance each customer actually experiences, not just the headline average, perhaps through tiered offerings or outcome-based adjustments.
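A small sketch shows how two customers of the same product can experience very different accuracy. The 95% and 60% figures come from the example above; the "general" category, its 85% figure, and both traffic mixes are hypothetical.

```python
# Accuracy by inquiry type. "password_reset" and "billing_dispute" use the
# figures from the text; "general" is a hypothetical filler category.
ACCURACY = {"password_reset": 0.95, "billing_dispute": 0.60, "general": 0.85}


def experienced_accuracy(mix: dict[str, float]) -> float:
    """Weighted accuracy for a customer whose traffic shares are given by `mix`."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(share * ACCURACY[category] for category, share in mix.items())


# Hypothetical customers: A sends mostly easy requests, B mostly hard ones.
customer_a = experienced_accuracy(
    {"password_reset": 0.7, "general": 0.2, "billing_dispute": 0.1})
customer_b = experienced_accuracy(
    {"password_reset": 0.1, "general": 0.2, "billing_dispute": 0.7})

print(f"customer A: {customer_a:.1%}, customer B: {customer_b:.1%}")
```

Under these assumed mixes, customer A sees roughly 90% accuracy and customer B under 70%, even though both bought the same product at the same list price.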

Confidence Intervals and Uncertainty Quantification add another layer of complexity. Advanced AI systems can provide not just predictions but confidence scores—"I'm 92% confident this diagnosis is correct" versus "I'm 68% confident." This meta-information about uncertainty has significant value implications. A system that knows when it doesn't know enables human-in-the-loop workflows where uncertain cases get escalated, dramatically improving overall outcomes.
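The human-in-the-loop pattern described above can be sketched as a simple threshold rule: automate predictions at or above a confidence threshold and escalate the rest. The sample data, the `Prediction` type, and the 0.85 threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Prediction:
    confidence: float  # model-reported confidence in [0, 1]
    correct: bool      # whether the prediction turned out to be right


def route(predictions: list[Prediction],
          threshold: float) -> tuple[float, Optional[float]]:
    """Return (escalation_rate, accuracy_of_automated_cases).

    Cases with confidence below `threshold` are escalated to a human.
    Accuracy is None when nothing is automated.
    """
    automated = [p for p in predictions if p.confidence >= threshold]
    escalated = len(predictions) - len(automated)
    auto_accuracy = (sum(p.correct for p in automated) / len(automated)
                     if automated else None)
    return escalated / len(predictions), auto_accuracy


# Hypothetical evaluation sample of ten predictions.
SAMPLE = [
    Prediction(0.97, True), Prediction(0.95, True), Prediction(0.92, True),
    Prediction(0.90, False), Prediction(0.85, True), Prediction(0.80, False),
    Prediction(0.72, True), Prediction(0.68, False), Prediction(0.60, False),
    Prediction(0.55, True),
]

print(route(SAMPLE, threshold=0.0))   # everything automated
print(route(SAMPLE, threshold=0.85))  # low-confidence cases escalated
```

In this sample, automating everything yields 60% accuracy, while escalating the low-confidence half raises the accuracy of automated cases to 80%. The trade-off is the human review cost of the escalated cases, which is part of what a buyer is weighing when deciding what the confidence scores are worth.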

The pricing question becomes: how do you charge for this uncertainty quantification capability? Is a system that provides confidence scores worth more than one that doesn't, even if average accuracy is similar? Research on AI probabilistic programming costs suggests that uncertainty quantification capabilities command premium pricing in enterprise contexts because they enable risk management and compliance workflows. Organizations implementing probabilistic programming frameworks report that the ability to quantify uncertainty reduces implementation risks and accelerates enterprise adoption, justifying higher price points.

Error Type Distribution matters profoundly in many domains. A fraud detection system might have 90% overall accuracy, but the distribution of false positives versus false negatives creates vastly different business impacts. False positives (flagging legitimate transactions as fraud) create customer friction and lost revenue. False negatives (missing actual fraud) create direct financial losses and regulatory exposure. The same 90% accuracy with different error distributions delivers fundamentally different value.
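As a rough illustration, the sketch below compares two fraud systems with identical 90% accuracy but opposite error mixes. The transaction counts and the per-error costs ($5 per false positive, $200 per false negative) are hypothetical and would differ by business.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ErrorProfile:
    total: int            # transactions scored
    false_positives: int  # legitimate transactions flagged as fraud
    false_negatives: int  # fraudulent transactions missed

    @property
    def accuracy(self) -> float:
        return 1 - (self.false_positives + self.false_negatives) / self.total

    def error_cost(self, cost_per_fp: float, cost_per_fn: float) -> float:
        """Total cost of errors under the given per-error cost assumptions."""
        return (self.false_positives * cost_per_fp
                + self.false_negatives * cost_per_fn)


# Two hypothetical systems with the same number of errors, split differently.
cautious = ErrorProfile(total=10_000, false_positives=900, false_negatives=100)
permissive = ErrorProfile(total=10_000, false_positives=100, false_negatives=900)

for name, profile in (("cautious", cautious), ("permissive", permissive)):
    cost = profile.error_cost(cost_per_fp=5, cost_per_fn=200)
    print(f"{name}: accuracy={profile.accuracy:.0%}, error cost=${cost:,}")
```

Under these assumed costs the permissive system's errors are several times more expensive despite the identical accuracy figure. A merchant for whom customer friction is costly and fraud losses are small would reverse the per-error costs and reach the opposite ranking.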

Sophisticated AI pricing must account for these error characteristics. A fraud system optimized to minimize false negatives (catching more fraud at the cost of more false alarms) might justify higher pricing for financial institutions with high fraud exposure. The same system tuned differently might command lower prices for low-risk merchants who prioritize customer experience over fraud prevention.

Consistency and Reproducibility represent another critical dimension often overlooked in AI pricing discussions. Some probabilistic systems produce similar outputs given similar inputs (high consistency despite being probabilistic), while others vary significantly across runs. For many enterprise applications, consistency matters as much as average accuracy. A content moderation system that flags the same content differently on different days creates operational chaos even if average accuracy is high.

Research from Gaine on probabilistic and deterministic results in AI systems notes that 69% of IT decision-makers in 2023 prioritized incorporating AI/ML into business operations, up 15 points from 2022, but many implementations struggled with the tension between probabilistic AI outputs and deterministic business process requirements. The consistency dimension helps bridge this gap—more consistent probabilistic systems command higher prices because they integrate more smoothly into deterministic workflows.

Degradation Patterns Over Time add temporal complexity. AI models often experience performance decay as data distributions shift, new edge cases emerge, or adversarial inputs evolve. A spam filter that starts at 95% accuracy might degrade to 85% over six months without retraining. The rate and pattern of this degradation significantly impact value delivery and operational costs.

Pricing models must account for this temporal dimension. Should prices decrease as model performance degrades? Should retraining be included in base pricing or charged separately? According to research on AI development costs and pricing structure, only a fraction of AI projects have scaled to production partly because costs and pricing models are unclear, making budgeting seem risky. The degradation pattern contributes to this uncertainty—customers cannot predict long-term performance, making fixed pricing feel risky for buyers and potentially unprofitable for vendors.
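One way to reason about the retraining question is to model the decay explicitly. The sketch below assumes accuracy falls linearly from 95% to 85% over six months, matching the spam filter example, and keeps falling at the same rate afterward. Real degradation is rarely this regular, and the 88% contractual floor is hypothetical.

```python
from typing import Optional


def accuracy_after(months: float, start: float = 0.95, end: float = 0.85,
                   horizon_months: float = 6.0) -> float:
    """Accuracy after `months` without retraining, assuming linear decay."""
    monthly_drop = (start - end) / horizon_months
    return start - monthly_drop * months


def first_month_below(floor: float, max_months: int = 60) -> Optional[int]:
    """First whole month at which accuracy is below `floor`.

    Returns None if that does not happen within `max_months`.
    """
    for month in range(max_months + 1):
        if accuracy_after(month) < floor:
            return month
    return None


# Hypothetical contractual floor of 88% accuracy.
retrain_by = first_month_below(floor=0.88)
print(f"accuracy drops below 88% in month {retrain_by}")
```

A vendor who includes retraining in the base price would need to budget for a retraining cycle before that month; a vendor who charges separately would use the same curve to tell the customer when the charge is likely to arrive.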

Context Dependency and Domain Specificity represent perhaps the most challenging dimension. The same AI model might perform at 90% accuracy in one industry vertical and 70% in another due to differences in data quality, use case complexity, or domain-specific requirements. A document extraction tool trained on legal contracts might excel at extracting terms from standardized agreements but struggle with custom partnership documents.

This context dependency suggests that one-size-fits-all pricing fails for probabilistic AI. The value delivered, and thus the defensible price, varies dramatically based on the customer's specific context. Research from Andreessen Horowitz on using generative AI to unlock probabilistic products notes that entire categories of generative media are uniquely enabled by non-deterministic platforms, but the value varies enormously based on how well the probabilistic outputs match specific use case requirements.

Understanding this spectrum of probabilistic quality enables more sophisticated pricing architectures. Rather than treating "probabilistic quality" as a monolithic challenge, executives can decompose it into specific dimensions—accuracy distribution, confidence quantification, error patterns, consistency, degradation, and context dependency—and design pricing mechanisms that address each dimension appropriately. This multi-dimensional approach forms the foundation for the advanced pricing models we'll explore next.

Emerging Pricing Models for Probabilistic AI: A Strategic Framework

The pricing innovation happening across the AI industry represents a fundamental rethinking of how to capture value in probabilistic environments. While no single model has emerged as dominant, several strategic approaches have gained traction, each addressing different aspects of the probabilistic quality challenge.

Usage-Based Pricing with Quality Tiers

The most prevalent model among major AI vendors is token-based usage pricing, where customers pay per unit of consumption (tokens, API calls, compute time) regardless of output quality. OpenAI charges $1.25 per million input tokens and $10 per million output tokens for GPT-5, while Anthropic's Claude Opus 4.6 costs $5 per million input tokens and $25 per million output tokens. Google's Gemini 2.5 Pro sits at $1.25 input and $10 output per million tokens.

This model elegantly handles cost variability—higher consumption generates higher revenue to cover inference costs. However, it fails to address quality uncertainty from the customer perspective. A customer paying for tokens receives no guarantee about output quality, creating the risk of paying for unusable results. As research from Impact Pricing notes, "AI has made pricing complicated again. In SaaS, sellers could rely on predictable usage and stable costs. With AI, both sides are uncertain."

The innovation emerging within usage-based pricing is quality-tiered models where customers select from multiple model tiers with different accuracy/cost trade-offs. OpenAI offers GPT-4o mini at $0.15/$0.60 per million tokens for basic tasks and GPT-5 at $1.25/$10 for complex reasoning. Anthropic provides Haiku 3.5 ($0.80/$4) for speed and Opus 4.6 ($5/$25) for capability. Google offers Gemini 2.0 Flash Lite ($0.08/$0.30) through Gemini 2.5 Pro ($1.25/$10).

This tiering allows customers to optimize cost versus quality based on use case criticality. Simple tasks use cheap, lower-accuracy models; critical tasks use expensive, higher-accuracy models. Research from LLM Gateway comparing OpenAI, Anthropic, and Google costs notes that mid-tier models often provide optimal quality-cost balance for production workloads, suggesting that sophisticated customers are learning to match model tiers to use case requirements.
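The matching of tiers to use case criticality can be sketched as a routing rule. The prices below are the OpenAI figures quoted above; the dictionary keys are plain labels rather than verified API model identifiers, and the workload (10,000 requests, 20% critical, 1,000 input and 500 output tokens each) is hypothetical.

```python
# (input price, output price) in USD per million tokens, as quoted in the text.
# Keys are illustrative labels, not guaranteed API model names.
TIERS = {
    "gpt-4o-mini": (0.15, 0.60),
    "gpt-5": (1.25, 10.00),
}


def pick_tier(critical: bool) -> str:
    """Route critical tasks to the expensive tier, everything else to the cheap one."""
    return "gpt-5" if critical else "gpt-4o-mini"


def request_cost(tier: str, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one request on the given tier."""
    input_price, output_price = TIERS[tier]
    return (input_tokens * input_price
            + output_tokens * output_price) / 1_000_000


def workload_cost(requests: int, critical_share: float,
                  input_tokens: int = 1_000, output_tokens: int = 500) -> float:
    """Total cost when only the critical share goes to the expensive tier."""
    critical = round(requests * critical_share)
    routine = requests - critical
    return (critical * request_cost(pick_tier(True), input_tokens, output_tokens)
            + routine * request_cost(pick_tier(False), input_tokens, output_tokens))


routed = workload_cost(requests=10_000, critical_share=0.2)
all_premium = workload_cost(requests=10_000, critical_share=1.0)
print(f"routed: ${routed:.2f}, all premium: ${all_premium:.2f}")
```

Under these assumptions, routing cuts the bill to roughly a quarter of the all-premium cost. The sketch says nothing about whether the cheaper tier's output is acceptable for the routine tasks, which is the quality judgment customers still have to make themselves.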

The strategic limitation of pure usage-based pricing, even with quality tiers, is that it shifts all quality risk to customers. They pay regardless of whether outputs meet their needs, creating pricing friction and limiting adoption for risk-averse enterprises.

Outcome-Based Pricing with Probabilistic Adjustments

At the opposite end of the spectrum, outcome-based pricing attempts to charge based on results delivered rather than resources consumed. Instead of charging per API call, vendors charge per successful customer service resolution, per accurate document extraction, or per qualified lead generated. This model theoretically aligns perfectly with probabilistic quality—customers pay only for outcomes that deliver value.

According to research from Monetizely's 2026 Guide to SaaS, AI, and Agentic Pricing Models, there's significant uncertainty around how to measure outcomes fairly, how to contract for them, and how to account for factors outside the AI's control. As of 2022, only about 17% of enterprise SaaS vendors used true outcome pricing, held back by measurement inconsistencies, disputes over attribution, and external factors.

The challenges multiply in probabilistic AI contexts. First, outcome definition and measurement become contentious. What constitutes a "successful" customer service resolution? If the AI provides an answer but the customer remains unsatisfied, did the outcome occur? Different stakeholders may have different definitions, creating contractual disputes.

Second, attribution problems plague outcome-based models. If an AI pricing optimization tool suggests a price increase and revenue grows, was the growth due to the AI, market conditions, seasonal factors, or other business initiatives? The probabilistic nature of AI makes clean attribution nearly impossible, creating ongoing disputes about whether outcomes should trigger payment.

Third, external dependencies affect outcomes in ways beyond the AI's control. A lead generation AI might identify perfect prospects, but if the sales team fails to convert them, should the vendor still be paid? These dependencies create risk for vendors who cannot control the full outcome chain.

Despite these challenges, outcome-based pricing is gaining traction in specific contexts where outcomes are clearly measurable and attribution is relatively clean. Organizations implementing AI-driven pricing intelligence report revenue lifts of up to 4.79% and quote turnaround time improvements, according to research on enterprise AI implementation strategy. When these outcomes can be measured reliably, outcome-based pricing becomes viable.

The emerging innovation is probabilistic outcome contracts that incorporate quality variance into pricing structures. Instead of guaranteeing specific outcomes, vendors commit to outcome distributions, for example "we'll achieve 85% accuracy on average, with a 90% confidence interval of 80% to 90%." Pricing adjusts based on actual performance relative to these probabilistic commitments, creating shared risk between vendor and customer.
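One possible shape for such a contract is a fee that stays flat while measured accuracy sits inside the committed band and moves when it falls outside. The sketch below is an assumption, not a documented vendor practice: the 80% to 90% band comes from the example above, while the 2% adjustment per percentage point and the 20% cap are hypothetical terms.

```python
def adjusted_fee(base_fee: float, measured_accuracy: float,
                 band_low: float = 0.80, band_high: float = 0.90,
                 rate_per_point: float = 0.02,
                 max_adjustment: float = 0.20) -> float:
    """Fee after a credit or bonus for performance outside the committed band.

    Each percentage point below `band_low` earns the customer a credit of
    `rate_per_point` of the base fee; each point above `band_high` earns the
    vendor a bonus of the same size. Both are capped at `max_adjustment`.
    """
    if measured_accuracy < band_low:
        points = (band_low - measured_accuracy) * 100
        adjustment = -min(points * rate_per_point, max_adjustment)
    elif measured_accuracy > band_high:
        points = (measured_accuracy - band_high) * 100
        adjustment = min(points * rate_per_point, max_adjustment)
    else:
        adjustment = 0.0
    return base_fee * (1 + adjustment)


for accuracy in (0.60, 0.75, 0.85, 0.93):
    print(f"{accuracy:.0%} measured -> ${adjusted_fee(10_000, accuracy):,.0f}")
```

The cap limits the vendor's downside in a bad period and the customer's exposure in a good one, which is what makes the risk shared rather than transferred. How accuracy is measured, and by whom, remains the hard contractual question.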

Hybrid Models: Combining Base Subscriptions with Usage and Outcome Components

The most sophisticated pricing architectures emerging in the market are hybrid models that combine multiple pricing mechanisms to address different aspects of the probabilistic quality challenge. According to research from Revenera on AI pricing strategy, 31% of AI vendors now use hybrid models mixing subscriptions, usage-based charges, and value-based components.

A typical hybrid structure might include:

- Base subscription covering platform access, basic features, and a usage allowance

- Usage-based charges for consumption beyond the base allowance

- Quality tier premiums for access to higher-accuracy models

- Outcome-based bonuses or penalties that adjust pricing based on measured results

This multi-component approach addresses different stakeholder needs. The base subscription provides revenue predictability for vendors and cost predictability for customers. Usage-based charges align costs with consumption and value. Quality tiers allow customers to optimize accuracy versus cost. Outcome components create incentives for vendor performance improvement.
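The four components listed above can be combined into a single invoice calculation. This is a minimal sketch of one possible structure; every field name and number in it is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HybridPlan:
    base_fee: float          # monthly subscription, includes a usage allowance
    included_units: int      # units of usage covered by the base fee
    overage_price: float     # price per unit beyond the allowance
    premium_tier_fee: float  # flat premium for access to higher-accuracy models
    outcome_rate: float      # bonus (or credit) per outcome above (or below) target


def monthly_invoice(plan: HybridPlan, units_used: int, premium_tier: bool,
                    outcomes: int, outcome_target: int) -> dict[str, float]:
    """Break a monthly bill into its four components plus the total."""
    lines = {
        "base": plan.base_fee,
        "overage": max(0, units_used - plan.included_units) * plan.overage_price,
        "quality_tier": plan.premium_tier_fee if premium_tier else 0.0,
        # Positive when the vendor beats the target, negative when it misses.
        "outcome_adjustment": (outcomes - outcome_target) * plan.outcome_rate,
    }
    lines["total"] = sum(lines.values())
    return lines


# Hypothetical plan and month of activity.
plan = HybridPlan(base_fee=5_000, included_units=100_000, overage_price=0.02,
                  premium_tier_fee=1_500, outcome_rate=2.0)
invoice = monthly_invoice(plan, units_used=130_000, premium_tier=True,
                          outcomes=850, outcome_target=800)
for item, amount in invoice.items():
    print(f"{item:>20}: ${amount:,.2f}")
```

Keeping the components as separate invoice lines lets both sides see how much of the bill is predictable (base, tier) and how much varies with consumption and results.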

Research from Hickam's Dictum on why per-seat pricing is dying in 2026 notes that hybrid models balance predictability with flexibility, addressing both vendor needs for sustainable economics and customer needs for cost control and value alignment. The article suggests that pure per-seat models are fading in favor of hybrids that better reflect AI's variable costs and probabilistic value delivery.

The strategic design challenge in hybrid models is component weighting—how much of total revenue comes from each component? A model weighted heavily toward base subscriptions provides stability but may not align well with value delivery. A model weighted toward usage creates better cost alignment but higher budget uncertainty. A model weighted toward outcomes creates strong performance incentives but measurement complexity.

Leading vendors are experimenting with different weightings for different customer segments. Enterprise customers with predictable workloads might prefer subscription-heavy models with usage caps. Mid-market customers