How to price AI products with audit trails and model logs

The artificial intelligence market has entered a critical maturity phase where governance, compliance, and transparency are no longer optional features—they're fundamental requirements that directly impact pricing strategy. As enterprises deploy AI systems that make consequential decisions affecting customers, employees, and business outcomes, the ability to track, audit, and explain those decisions has become a competitive differentiator and a regulatory necessity. According to research from Sparkco, companies with comprehensive audit trail capabilities saw 30% fewer compliance issues within their first year of implementation, while those without proper logging face mounting scrutiny from regulators and customers alike.

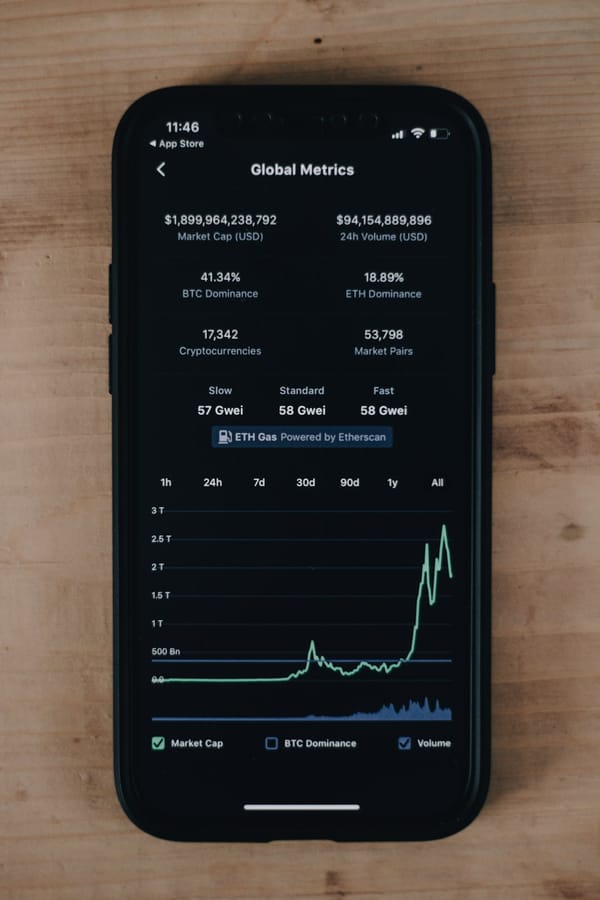

The pricing challenge for AI products with audit trails and model logs extends far beyond simple feature packaging. These capabilities represent substantial infrastructure investments—including storage costs that can multiply by 3-5x in regulated industries, computational overhead of 15-35% for real-time logging, and compliance requirements that vary dramatically across jurisdictions. Yet despite these costs, market data from 2025 shows that only 48% of organizations monitor production AI systems adequately, creating both a market opportunity and a strategic imperative for vendors who can price governance features appropriately.

This deep dive explores the multifaceted landscape of pricing AI products with audit trails and model logs, examining how leading providers structure their offerings, what enterprises actually pay for governance capabilities, and how to build pricing models that reflect both the technical costs and strategic value of compliance features. We'll analyze real-world implementations, dissect the economics of audit infrastructure, and provide actionable frameworks for pricing decisions that balance profitability with market accessibility.

Why Audit Trails and Model Logs Command Premium Pricing

The fundamental value proposition of audit trails and model logs stems from their ability to transform AI systems from black boxes into accountable, explainable tools that meet enterprise standards for governance and risk management. This transformation addresses critical business needs that executives are increasingly willing to pay for, particularly as regulatory frameworks like the EU AI Act, GDPR, and industry-specific requirements mandate comprehensive tracking of AI decision-making processes.

Enterprise spending on generative AI reached $37 billion in 2025, up 3.2x from $11.5 billion in 2024, according to Menlo Ventures research. Within this spending surge, governance features represent a growing proportion of budgets as organizations recognize that ungoverned AI implementations carry substantial risk. Companies like Grant Thornton achieved 30% reductions in audit duration by implementing AI-driven analytics with traceable logs of high-risk transactions, demonstrating quantifiable ROI that justifies premium pricing for governance capabilities.

The technical infrastructure required to support comprehensive audit trails creates genuine cost pressures that must be reflected in pricing models. Storage requirements for detailed interaction logs, model version histories, and compliance records can add 30-40% to total AI infrastructure costs, and data preparation and logging consume significant GPU time at approximately $3 per hour for NVIDIA A100 instances. Network egress fees for log retrieval range from $0.08-$0.12 per GB, while retention policies driven by regulatory requirements (such as GDPR's accountability provisions or the FDA's 21 CFR Part 11 rules in GxP environments) mandate long-term storage that compounds costs over time.

Beyond infrastructure, the computational overhead of governance features adds measurable latency and resource consumption to AI operations. Real-time logging of model inputs, outputs, intermediate states, and decision pathways can increase token consumption and inference costs by 10-15%, while integration with compliance frameworks requires additional API modifications, error handling, and validation testing that add 15-35% to base compute expenses. These aren't trivial add-ons—they're fundamental architectural requirements that change the economics of AI delivery.

The market segmentation between enterprises and SMBs reveals stark differences in willingness to pay for governance features. Enterprise organizations allocate $1,240 per employee annually for AI initiatives and operate with technology budgets measured in millions, enabling them to prioritize comprehensive governance as an operational necessity. In contrast, SMBs spend an average of $18,000 annually on AI tools and cite cost as the top adoption barrier; 68% of SMBs use AI, yet 72% fail due to a lack of basic governance such as logging and PII protection. This creates a pricing challenge: governance features must be accessible enough for SMB adoption while capturing enterprises' willingness to pay premium prices for customization, integration, and advanced capabilities.

Regulatory compliance requirements further elevate the strategic value of audit trails. GDPR's accountability principle and its rules on automated decision-making effectively require detailed records of how AI systems process personal data, with fines for the most serious infringements reaching €20 million or 4% of global annual turnover, whichever is higher. A SOC 2 Type II attestation requires comprehensive audit logging of AI system changes and access controls, even as enterprises increasingly demand zero data retention policies from vendors. Industry-specific regulations add further complexity: GxP requirements for pharmaceutical and healthcare AI demand secure, time-stamped logs for every data point under 21 CFR Part 11 and EU GMP Annex 11, while financial services firms must maintain explainable AI audit trails under SEC record-keeping rules and FCRA provisions.

The competitive landscape shows that companies demonstrating SOC 2/ISO compliance achieve 34% higher revenue growth than non-compliant peers, enabling premium pricing for audit-ready features. This creates a market dynamic where governance capabilities serve dual purposes: they're both cost centers requiring significant infrastructure investment and value drivers that differentiate offerings and justify higher price points. Understanding this duality is essential for developing pricing strategies that balance cost recovery with competitive positioning.

How Leading AI Platforms Price Governance Features

The major cloud AI platforms—AWS Bedrock, Microsoft Azure AI, Google Vertex AI, Anthropic, and OpenAI—have adopted varied approaches to pricing audit trails and governance features, reflecting different strategic priorities and target market segments. While comprehensive public documentation of governance-specific pricing remains limited, analysis of their tier structures and enterprise offerings reveals distinct patterns in how these providers monetize compliance capabilities.

OpenAI's pricing model centers on token-based consumption, with GPT-4o priced at $0.005-$0.01 per 1,000 input tokens and GPT-3.5 under $0.002 per 1,000 tokens. However, governance features like detailed logging, audit trails, and compliance reporting are primarily packaged within enterprise agreements rather than exposed as line-item charges. OpenAI's ChatGPT Enterprise tier, which costs organizations $25,000-$300,000 monthly depending on scale, includes enhanced security controls, admin analytics, and data governance features that aren't available in standard API access. This bundling approach positions governance as an enterprise-only capability, creating clear market segmentation while avoiding the complexity of metered pricing for compliance features.

Microsoft Azure AI leverages its enterprise heritage to offer governance capabilities integrated across its AI services. Azure OpenAI Service pricing follows a hybrid model combining token-based charges ($0.0015-$0.03 per 1,000 tokens across models up to GPT-4 Turbo) with provisioned throughput units (PTUs) that guarantee capacity and performance. Governance features, including Azure Monitor integration, Azure Policy compliance tracking, and audit logging through the Azure activity log, are included within Azure's broader security and compliance framework rather than priced separately for AI workloads. This approach reduces friction for enterprises already invested in the Azure ecosystem but makes it difficult to isolate the specific cost of AI governance capabilities. Enterprise customers typically negotiate volume discounts and unified billing across Azure services, with governance features serving as value-adds that strengthen platform lock-in rather than as standalone revenue drivers.

Google Vertex AI employs modular, usage-based pricing with separate charges for training, tuning, and inference, billing for token usage, compute resources (CPU, GPU), and endpoint uptime. According to comparative analysis, Vertex AI costs approximately £120 per 50,000 queries for Gemini 1.5 Pro, significantly lower than AWS Bedrock's £360 for Claude 3.5 or Azure AI Studio's £450 for GPT-4 Turbo. Google's governance capabilities (including Vertex AI Model Monitoring for drift detection, Vertex Explainable AI for interpretability, and integration with Google Cloud's audit logging) are priced as add-on services with consumption-based fees. This unbundled approach provides transparency but adds complexity as governance costs scale with usage, potentially creating bill shock for enterprises with extensive logging requirements.
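Because these figures are quoted per 50,000 queries, normalizing them to a per-query cost makes the gap easier to read. The sketch below only re-expresses the quoted numbers; it assumes each quote covers 50,000 comparable queries and ignores governance add-ons, which would be billed on top.

```python
# Batch prices quoted in the comparison above (GBP per 50,000 queries).
QUOTES_GBP_PER_50K = {
    "Vertex AI (Gemini 1.5 Pro)": 120.0,
    "AWS Bedrock (Claude 3.5)": 360.0,
    "Azure AI Studio (GPT-4 Turbo)": 450.0,
}
BATCH_SIZE = 50_000  # assumption: every quote covers the same number of comparable queries


def per_query_cost(batch_price: float, batch_size: int = BATCH_SIZE) -> float:
    """Average cost of a single query implied by a batch price."""
    return batch_price / batch_size


def relative_to_cheapest(quotes: dict) -> dict:
    """Express each quote as a multiple of the cheapest quote."""
    cheapest = min(quotes.values())
    return {name: price / cheapest for name, price in quotes.items()}


if __name__ == "__main__":
    ratios = relative_to_cheapest(QUOTES_GBP_PER_50K)
    for name, price in QUOTES_GBP_PER_50K.items():
        print(f"{name}: £{per_query_cost(price):.4f} per query, {ratios[name]:.2f}x cheapest")
```

Under that assumption, the Azure figure works out to 3.75 times the Vertex AI figure per query (£0.009 versus £0.0024).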

AWS Bedrock offers Claude 3.5 Sonnet at $0.003 per 1,000 input tokens and $0.015 per 1,000 output tokens, with Provisioned Throughput (including discounted commitment terms) available for cost optimization. Bedrock's governance features leverage AWS's compliance infrastructure, including CloudTrail for API logging, CloudWatch for monitoring, and AWS Config for configuration tracking. Like Azure, AWS bundles governance capabilities within its broader platform rather than pricing them separately for AI workloads. This creates cost efficiencies for enterprises with existing AWS investments but obscures the true cost of AI-specific governance. AWS's enterprise customers typically operate under Enterprise Discount Programs (EDPs) that provide volume-based pricing across all services, making it difficult to isolate governance-related expenses.
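Token-based rates like these translate directly into per-request costs, and any extra tokens spent carrying audit context raise that cost proportionally. The sketch below applies a hypothetical `governance_overhead` fraction (for example, the 10-15% token increase cited earlier for real-time logging) equally to input and output tokens; that even split is a simplifying assumption, and the request size in the usage note is invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenRates:
    """On-demand prices in USD per 1,000 tokens."""
    input_per_1k: float
    output_per_1k: float


# Claude 3.5 Sonnet on AWS Bedrock, using the rates quoted above.
CLAUDE_35_SONNET = TokenRates(input_per_1k=0.003, output_per_1k=0.015)


def request_cost(rates: TokenRates, input_tokens: int, output_tokens: int,
                 governance_overhead: float = 0.0) -> float:
    """USD cost of one request.

    governance_overhead is a hypothetical fractional increase in token usage
    caused by logging/audit context (e.g. 0.10-0.15). As a simplifying
    assumption it is applied equally to input and output tokens.
    """
    if governance_overhead < 0:
        raise ValueError("governance_overhead must be non-negative")
    base = ((input_tokens / 1000) * rates.input_per_1k
            + (output_tokens / 1000) * rates.output_per_1k)
    return base * (1.0 + governance_overhead)


if __name__ == "__main__":
    # Hypothetical request shape: 2,000 input tokens, 500 output tokens.
    base = request_cost(CLAUDE_35_SONNET, 2000, 500)
    governed = request_cost(CLAUDE_35_SONNET, 2000, 500, governance_overhead=0.15)
    print(f"base ${base:.6f}, with 15% overhead ${governed:.6f}")
    print(f"extra per million requests: ${(governed - base) * 1_000_000:,.0f}")
```

For that hypothetical request, the base cost is $0.0135; a 15% governance overhead raises it to about $0.0155, or roughly $2,000 more per million requests.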

Anthropic's Claude API pricing follows token-based models with enterprise contracts that include enhanced governance features. While specific governance pricing isn't publicly detailed, enterprise implementations range from $20,000-$250,000 monthly, suggesting that audit trails, compliance reporting, and advanced logging are packaged within premium tiers rather than sold à la carte. Anthropic's emphasis on AI safety and Constitutional AI positions governance as a core product differentiator, potentially justifying higher price points for enterprises prioritizing responsible AI deployment.

The pattern across these platforms reveals a strategic preference for bundling governance features within enterprise tiers rather than exposing them as discrete, metered services. This approach simplifies sales conversations, reduces billing complexity, and positions governance as a value-add that justifies premium pricing for higher-tier packages. However, it also creates opacity around the true cost of compliance capabilities and makes it difficult for customers to optimize governance spending or compare offerings across vendors.

For AI product companies developing their own pricing strategies, this landscape suggests several considerations. First, the bundled approach used by major platforms may not be optimal for specialized governance tools or AI products where compliance is a primary value driver. Second, the lack of transparent governance pricing creates an opportunity for differentiation through clear, consumption-based pricing that aligns costs with actual usage. Third, the wide variance in base platform costs (£120-£450 per 50,000 queries) means buyers are already comparing vendors closely on price, so governance charges layered on top of that base can meaningfully shift competitive positioning, particularly in price-sensitive segments.

The True Cost Structure of Audit Trail Infrastructure

Understanding the economics of audit trail and model logging infrastructure is essential for developing pricing models that ensure profitability while remaining competitive. The cost structure extends far beyond simple storage fees, encompassing computational overhead, data processing, retention management, and compliance-specific requirements that vary dramatically across industries and regulatory environments.

Storage costs represent the most visible component of audit trail infrastructure, but teams often underestimate them by 30-50% when they fail to account for the full lifecycle of logged data. Cloud storage pricing varies by tier and access frequency, with frequently accessed tiers costing significantly more per GB than infrequent-access tiers. Because AI audit trails must be quickly retrievable for compliance investigations and real-time monitoring, organizations typically provision standard (hot) storage, which costs $0.023-$0.043 per GB monthly at major cloud providers. Network egress fees compound these costs, ranging from $0.08-$0.12 per GB when logs are retrieved for analysis or exported to external systems.

According to research on AI infrastructure costs, misconfigured storage affects up to 90% of teams, leading to unnecessary expenses from overprovisioned capacity, inappropriate storage tiers, or failure to implement lifecycle policies that move older logs to cheaper archival storage. For a medium-sized AI deployment generating 1TB of audit logs monthly and keeping all of it, storage costs alone can reach $1,656-$3,096 in the first year at standard rates (the stored volume averages 6TB across the year, or 72 TB-months, at $0.023-$0.043 per GB-month), before accounting for retrieval, processing, or long-term retention requirements.
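One way to model first-year storage is to treat logs as accumulating steadily and never being deleted. The sketch below assumes 1TB = 1,000 GB, logs arriving evenly through each month (so month m is billed on an average of m - 0.5 months' worth of data), and a flat per-GB-month price. The 10% retrieval fraction passed to `egress_cost` is a hypothetical input, not a figure from the research cited above.

```python
def cumulative_storage_cost(monthly_gb: float, months: int,
                            price_per_gb_month: float) -> float:
    """Storage bill when logs accumulate and nothing is deleted or archived.

    Assumes logs arrive evenly through each month, so in month m the average
    stored volume is (m - 0.5) * monthly_gb. Summed over N months this is
    monthly_gb * N**2 / 2 GB-months.
    """
    gb_months = sum((m - 0.5) * monthly_gb for m in range(1, months + 1))
    return gb_months * price_per_gb_month


def egress_cost(retrieved_gb: float, price_per_gb: float) -> float:
    """Cost of pulling logs out of the cloud for analysis or export."""
    return retrieved_gb * price_per_gb


if __name__ == "__main__":
    MONTHLY_GB = 1_000  # 1TB per month, treating 1TB as 1,000 GB
    for price in (0.023, 0.043):
        cost = cumulative_storage_cost(MONTHLY_GB, 12, price)
        print(f"year 1 at ${price}/GB-month: ${cost:,.0f}")
    # Hypothetical: 10% of a year's logs (12,000 GB) exported once.
    for rate in (0.08, 0.12):
        print(f"egress at ${rate}/GB: ${egress_cost(0.10 * 12 * MONTHLY_GB, rate):,.0f}")
```

With 1,000 GB arriving each month for 12 months, this model bills 72,000 GB-months, or $1,656 at $0.023 and $3,096 at $0.043 per GB-month; exporting 10% of that year's 12,000 GB at $0.08-$0.12 per GB would add $96-$144 in egress.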

Computational overhead for governance features adds 15-35% to base AI compute costs through several mechanisms. Real-time logging requires additional processing to capture model inputs, outputs, intermediate states, and metadata for each inference request. This increases token consumption for language models and adds latency to inference pipelines, potentially requiring additional compute capacity to maintain performance SLAs. For GPU-intensive workloads, logging overhead consumes GPU cycles that could otherwise be used for inference, effectively reducing throughput and increasing the cost per inference.
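The throughput effect described above can be expressed as a cost per inference: the hourly GPU bill is spread over fewer requests when logging takes a share of capacity. This sketch assumes logging overhead is a fixed fraction of GPU time and uses a hypothetical throughput of 3,600 inferences per GPU-hour; only the roughly $3 per hour A100 rate comes from the discussion earlier in this article.

```python
def cost_per_inference(gpu_hourly_usd: float, inferences_per_hour: float,
                       logging_share: float = 0.0) -> float:
    """GPU cost per inference when logging consumes part of the GPU's time.

    logging_share is the assumed fraction of GPU time spent on logging work.
    Usable throughput becomes inferences_per_hour * (1 - logging_share), so the
    same hourly bill is spread over fewer inferences.
    """
    if not 0.0 <= logging_share < 1.0:
        raise ValueError("logging_share must be in [0, 1)")
    if inferences_per_hour <= 0:
        raise ValueError("inferences_per_hour must be positive")
    return gpu_hourly_usd / (inferences_per_hour * (1.0 - logging_share))


def capacity_share_for_cost_increase(cost_increase: float) -> float:
    """Inverse view: the share of GPU time that produces a given cost increase."""
    return 1.0 - 1.0 / (1.0 + cost_increase)


if __name__ == "__main__":
    A100_HOURLY = 3.0   # approximate rate cited earlier
    THROUGHPUT = 3_600  # hypothetical inferences per GPU-hour
    base = cost_per_inference(A100_HOURLY, THROUGHPUT)
    logged = cost_per_inference(A100_HOURLY, THROUGHPUT, logging_share=0.15)
    print(f"${base:.6f} -> ${logged:.6f} per inference (+{logged / base - 1:.1%})")
    print(f"a 35% cost increase implies {capacity_share_for_cost_increase(0.35):.1%} of capacity")
```

A capacity share f raises cost per inference by 1/(1 - f) - 1, so giving up 15% of GPU time raises cost by about 17.6%, and the upper 35% cost increase corresponds to losing roughly 26% of capacity.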

Integration and testing for governance features represent often-overlooked cost drivers. Implementing comprehensive audit trails requires API modifications, error handling logic, and validation testing that consumes 10-15% of development budgets—typically 2x higher than initial estimates. For AI audit service operations, fixed costs include payroll for compliance specialists ($43,000 monthly for 35 FTEs in one analyzed model), cloud compute infrastructure that scales with audit volume, and tools/licenses that can reach 40% of revenue for specialized compliance platforms.

Retention policies dramatically impact long-term infrastructure costs. Regulatory requirements often mandate multi-year retention periods: GDPR's accountability provisions suggest retaining logs for as long as personal data is processed plus statute of limitations periods (typically 3-6 years), while financial services regulations may require 7-10 year retention for audit trails related to customer transactions. GxP requirements in pharmaceutical and healthcare contexts demand retention throughout a product's lifecycle plus additional years for regulatory access.
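Because a single deployment often falls under several regimes at once, retention is usually set by the longest applicable requirement. The configuration sketch below encodes the planning ranges mentioned above as hypothetical defaults (not legal guidance) and requires GxP retention to be supplied explicitly, since it depends on the product lifecycle. The regime keys (`"gdpr"`, `"financial"`, `"gxp"`) are illustrative names, not an established schema.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class RetentionRange:
    """Planning range for how long audit logs are kept, in years."""
    low_years: int
    high_years: int


# Hypothetical defaults based on the ranges discussed above; not legal advice.
RETENTION_RANGES = {
    "gdpr": RetentionRange(3, 6),        # processing period plus limitation period
    "financial": RetentionRange(7, 10),  # customer-transaction audit trails
}


def required_retention_years(regimes: Iterable[str], conservative: bool = True,
                             gxp_years: Optional[int] = None) -> int:
    """Longest retention implied by all applicable regimes.

    conservative=True plans for the top of each range. GxP retention depends on
    the product lifecycle, so it must be passed in explicitly as gxp_years.
    """
    active = set(regimes)
    unknown = active - set(RETENTION_RANGES) - {"gxp"}
    if unknown:
        raise KeyError(f"no retention rule for: {sorted(unknown)}")
    years = [
        RETENTION_RANGES[r].high_years if conservative else RETENTION_RANGES[r].low_years
        for r in active & set(RETENTION_RANGES)
    ]
    if "gxp" in active:
        if gxp_years is None:
            raise ValueError("pass gxp_years: product lifecycle plus additional years")
        years.append(gxp_years)
    return max(years, default=0)


if __name__ == "__main__":
    print(required_retention_years(["gdpr", "financial"]))          # conservative plan
    print(required_retention_years(["gdpr", "gxp"], gxp_years=15))  # hypothetical lifecycle
```

Taking the maximum rather than summing reflects that one retained copy of a log can satisfy several regimes at once.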

For an AI system generating 1TB of logs monthly, a 5-year retention policy creates 60TB of cumulative storage. Because that volume builds up gradually, the bill covers roughly 1,800 TB-months, or $41,400-$77,400 over the retention period at standard rates (before accounting for archival tier migration that could reduce costs by 50-70%). These long-term costs must be factored into pricing models, either through upfront pricing that amortizes retention costs or through ongoing storage fees that reflect actual consumption.
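Archival migration can be layered onto a simple accumulation model. This sketch assumes each monthly batch of logs is billed for half a month in its arrival month, stays in hot storage for `hot_months` months, and then moves to an archive tier for the rest of the period. The $0.004 per GB-month archive price is an assumed placeholder, so the savings it produces depend heavily on current provider pricing and the chosen hot window.

```python
def tiered_storage_cost(monthly_gb: float, months: int, hot_price: float,
                        archive_price: float, hot_months: int) -> float:
    """Storage bill when each monthly batch of logs moves to an archive tier.

    Assumptions: logs arrive evenly, so a batch is billed for half a month in
    the month it arrives; a batch stays in the hot tier while its age (whole
    months since arrival) is below hot_months, then is billed at archive_price.
    Nothing is deleted before the end of the period.
    """
    total = 0.0
    for arrival in range(1, months + 1):
        for month in range(arrival, months + 1):
            age = month - arrival
            share = 0.5 if age == 0 else 1.0  # half a month in the arrival month
            price = hot_price if age < hot_months else archive_price
            total += share * monthly_gb * price
    return total


def savings_fraction(untiered: float, tiered: float) -> float:
    """Share of the all-hot bill avoided by tiering."""
    return 1.0 - tiered / untiered


if __name__ == "__main__":
    MONTHLY_GB, MONTHS = 1_000, 60
    HOT, ARCHIVE = 0.023, 0.004  # archive price is an assumed placeholder
    flat = tiered_storage_cost(MONTHLY_GB, MONTHS, HOT, HOT, hot_months=3)
    tiered = tiered_storage_cost(MONTHLY_GB, MONTHS, HOT, ARCHIVE, hot_months=3)
    print(f"all hot: ${flat:,.0f}  tiered: ${tiered:,.0f}  "
          f"savings: {savings_fraction(flat, tiered):.0%}")
```

With 1,000 GB per month over 60 months, a 3-month hot window, $0.023 hot pricing, and the assumed archive price, the model's total falls from $41,400 to $9,993; a pricier archive tier or a longer hot window would shrink that gap.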

Industry-specific compliance requirements can multiply infrastructure costs by 3-5x compared to standard implementations. FinTech applications requiring detailed per-interaction logging with compliance context add substantial storage on top of inference costs. HealthTech data residency mandates often require on-premise or sovereign cloud infrastructure that costs 3-5x more than standard multi-tenant API pricing. These multipliers create significant pricing challenges: vendors must either absorb these costs (reducing margins for regulated industry customers) or implement industry-specific pricing tiers that reflect true infrastructure costs.

The hidden costs of governance infrastructure extend to operational overhead. Managing audit trail systems requires specialized expertise in compliance frameworks, data lifecycle management, and security controls. Organizations must implement access controls for audit logs (to prevent tampering while enabling legitimate access), backup and disaster recovery systems (to ensure audit trail continuity), and monitoring/alerting infrastructure (to detect anomalies or gaps in logging). These operational costs can add 20-30% to the direct infrastructure expenses.
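The multipliers in the last two paragraphs compound: a regulated-industry multiplier applies to direct infrastructure, and operational overhead then applies on top. The sketch below is a back-of-the-envelope calculator under that assumption; the $2,000 monthly baseline and the 70% target gross margin are hypothetical inputs.

```python
def regulated_monthly_cost(base_infra_usd: float, industry_multiplier: float = 1.0,
                           ops_overhead: float = 0.0) -> float:
    """Direct infrastructure scaled by an industry multiplier, plus operational overhead.

    Assumes the two effects compound: the multiplier (e.g. 3-5x) applies to
    direct infrastructure, and ops_overhead (e.g. 0.20-0.30) applies to the result.
    """
    if industry_multiplier < 1.0:
        raise ValueError("industry_multiplier should be >= 1")
    if ops_overhead < 0.0:
        raise ValueError("ops_overhead must be non-negative")
    return base_infra_usd * industry_multiplier * (1.0 + ops_overhead)


def price_for_gross_margin(monthly_cost: float, gross_margin: float) -> float:
    """Price at which (price - cost) / price equals gross_margin."""
    if not 0.0 <= gross_margin < 1.0:
        raise ValueError("gross_margin must be in [0, 1)")
    return monthly_cost / (1.0 - gross_margin)


if __name__ == "__main__":
    BASE = 2_000.0  # hypothetical monthly governance infrastructure baseline
    low = regulated_monthly_cost(BASE, 3.0, 0.20)
    high = regulated_monthly_cost(BASE, 5.0, 0.30)
    print(f"regulated cost range: ${low:,.0f}-${high:,.0f} per month")
    print(f"price for 70% margin at the low end: ${price_for_gross_margin(low, 0.70):,.0f}")
```

Under these assumptions, a $2,000 monthly baseline becomes $7,200 at the low end (3x, 20% overhead) and $13,000 at the high end (5x, 30%), and holding a 70% gross margin at the low end would require a price of about $24,000 per month.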

For AI product companies, understanding this cost structure is critical for several pricing decisions. First, it informs the threshold at which bundled pricing becomes more profitable than consumption-based pricing (typically when a customer's storage and retention costs are small enough that the overhead of metering and billing them outweighs the costs being recovered). Second, it reveals opportunities for tiered pricing based on retention periods, with shorter retention (30-90 days) priced significantly lower than long-term retention (3-5 years). Third, it highlights the importance of cost optimization features, such as automated lifecycle policies, compression, and archival tier migration, that can reduce infrastructure costs by 40-60% and should be reflected in pricing models.
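Retention-based tiers can be priced from the per-GB cost of keeping a log for each tier's retention period. This sketch assumes each GB is billed for the full retention period, with the first few months in hot storage and the remainder in an archive tier; the hot and archive prices, the 3-month hot window, the 60% target margin, and the tier names are all illustrative assumptions.

```python
def lifetime_cost_per_gb(retention_months: int, hot_price: float,
                         archive_price: float, hot_months: int) -> float:
    """Storage cost of keeping 1 GB for the whole retention period (USD)."""
    hot = min(retention_months, hot_months)
    archive = max(0, retention_months - hot_months)
    return hot * hot_price + archive * archive_price


def list_price(cost: float, target_margin: float) -> float:
    """Price that yields target_margin as a gross margin over cost."""
    if not 0.0 <= target_margin < 1.0:
        raise ValueError("target_margin must be in [0, 1)")
    return cost / (1.0 - target_margin)


# Hypothetical tiers: retention period in months.
TIERS_MONTHS = {"90-day": 3, "3-year": 36, "5-year": 60}


def retention_price_sheet(hot_price: float = 0.023, archive_price: float = 0.004,
                          hot_months: int = 3, target_margin: float = 0.6) -> dict:
    """Price per GB ingested for each retention tier."""
    return {
        name: list_price(lifetime_cost_per_gb(m, hot_price, archive_price, hot_months),
                         target_margin)
        for name, m in TIERS_MONTHS.items()
    }


if __name__ == "__main__":
    for tier, price in retention_price_sheet().items():
        print(f"{tier}: ${price:.4f} per GB ingested")
```

With the default assumptions, the sheet prices the 90-day tier at about $0.17 per GB ingested and the 5-year tier at about $0.74, a roughly 4.3x spread driven almost entirely by archive months.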

The economics also suggest that governance features may have different unit economics than core AI inference. While inference costs scale primarily with usage volume, governance costs have significant fixed components (storage infrastructure, retention management, compliance tooling) that create economies of scale. This implies that pricing models should potentially separate governance fees from inference fees, with governance priced to recover fixed costs plus a margin on variable costs, rather than simply adding a percentage markup to inference pricing.
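Separating the two fees can be expressed as a per-customer governance charge: a share of fixed costs plus a margin on variable costs, set alongside a percentage markup for comparison. This is a sketch of that idea under assumed inputs; the $50,000 fixed cost, $120 variable cost, 40% margin, and 20% markup are all hypothetical.

```python
def governance_fee(fixed_monthly_usd: float, customers: int,
                   variable_cost_usd: float, variable_margin: float) -> float:
    """Per-customer monthly governance fee, priced separately from inference.

    Fixed costs (storage platform, retention management, compliance tooling)
    are spread across the customer base; per-customer variable cost gets a
    margin on top.
    """
    if customers <= 0:
        raise ValueError("customers must be positive")
    return fixed_monthly_usd / customers + variable_cost_usd * (1.0 + variable_margin)


def markup_fee(inference_bill_usd: float, markup: float) -> float:
    """The alternative: governance as a flat percentage of the inference bill."""
    return inference_bill_usd * markup


if __name__ == "__main__":
    # All inputs are hypothetical.
    for n in (100, 500):
        print(f"{n} customers: ${governance_fee(50_000, n, 120, 0.40):,.0f} per customer")
    print(f"20% markup on a $2,000 bill: ${markup_fee(2_000, 0.20):,.0f}")
```

With these inputs the fee is $668 per customer at 100 customers and $268 at 500, which is the economies-of-scale effect described above; the flat markup stays at $400 regardless of how many customers share the fixed costs.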

Packaging Strategies: Bundled vs. Unbundled Governance Features

The strategic decision between bundling governance features with core AI products or offering them as separate, unbundled options has profound implications for pricing, market positioning, and customer acquisition across different segments. Analysis of successful implementations and market data reveals that the optimal approach varies based on target customer profile, competitive dynamics, and the strategic role of governance in your value proposition.

The bundled approach, exemplified by major platforms like Azure and AWS, integrates audit trails and model logging into higher-tier packages without exposing separate pricing for governance capabilities. This strategy offers several advantages: it simplifies the buying decision by presenting governance as an included benefit rather than an additional cost consideration, reduces billing complexity, and positions governance as a value-add that justifies premium pricing for enterprise tiers. According to market analysis, enterprises operating with $1,240 per employee annual AI budgets and multi-million dollar technology investments prefer comprehensive packages that include governance rather than piecemeal purchases.

Bundling works particularly well when governance features are table-stakes requirements for your target market. For AI products serving regulated industries like financial services, healthcare, or government, comprehensive audit trails aren't optional—they're prerequisites for consideration. In these contexts, unbundling governance creates friction in the sales process and may signal that compliance is an afterthought rather than a core capability. Research shows that companies demonstrating SOC 2/ISO compliance achieve 34% higher revenue growth, suggesting that bundling compliance features into all tiers (or at least all enterprise tiers) can accelerate sales cycles and improve win rates.

However, bundling has significant drawbacks. It obscures the true value of governance features, making it difficult for customers to understand what they're paying for and potentially undervaluing capabilities that required substantial investment to develop. It also creates pricing rigidity: once governance is bundled, it's challenging to adjust pricing as infrastructure costs change or as you add more sophisticated governance capabilities. Additionally, bundling may price out cost-sensitive segments who need basic AI capabilities but don't require comprehensive governance, potentially limiting market reach.

The unbundled approach treats audit trails and model logging as separate SKUs with distinct pricing, either through add-on modules or consumption-based fees tied to logging volume. This strategy provides transparency that appeals to sophisticated buyers who want to optimize spending, enables tiered governance offerings that serve different compliance needs, and creates flexibility to adjust pricing as costs and capabilities evolve. Google Vertex AI's modular pricing exemplifies this approach, with separate charges for monitoring, explainability, and logging services.
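For a buyer comparing a bundled tier with an unbundled base plan plus a per-GB logging fee, the decision reduces to a break-even logging volume. The sketch below assumes a single linear logging fee with no volume discounts; the $5,000 tier, $3,500 base plan, and $0.75 per logged GB are hypothetical prices.

```python
def unbundled_bill(base_fee: float, logged_gb: float, fee_per_logged_gb: float) -> float:
    """Monthly bill for a base plan plus a consumption-based logging SKU."""
    return base_fee + logged_gb * fee_per_logged_gb


def break_even_logged_gb(bundled_fee: float, base_fee: float,
                         fee_per_logged_gb: float) -> float:
    """Logging volume at which the unbundled bill equals the bundled tier price."""
    if fee_per_logged_gb <= 0:
        raise ValueError("fee_per_logged_gb must be positive")
    if bundled_fee < base_fee:
        raise ValueError("bundled tier costs less than the base plan; no break-even")
    return (bundled_fee - base_fee) / fee_per_logged_gb


def cheaper_option(bundled_fee: float, base_fee: float, logged_gb: float,
                   fee_per_logged_gb: float) -> str:
    """Which packaging gives this customer the lower monthly bill (ties go to bundled)."""
    unbundled = unbundled_bill(base_fee, logged_gb, fee_per_logged_gb)
    return "unbundled" if unbundled < bundled_fee else "bundled"


if __name__ == "__main__":
    # Hypothetical prices.
    print(break_even_logged_gb(5_000, 3_500, 0.75))
    for gb in (1_500, 3_000):
        print(gb, cheaper_option(5_000, 3_500, gb, 0.75))
```

With these prices the break-even is 2,000 GB per month: lighter loggers save with the unbundled option, heavier ones with the bundle. The same calculation tells a vendor which customer segments each packaging choice will attract.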

Unbundling excels when serving diverse market segments with varying governance needs. SMBs adopting AI may need basic logging for operational troubleshooting but not comprehensive compliance-grade audit trails, while enterprises in highly regulated industries require extensive logging with long retention periods. Offering governance as optional add-ons (e