How to package human review credits in AI products

The convergence of artificial intelligence and human expertise represents one of the most complex monetization challenges facing modern AI product leaders. As AI systems become increasingly sophisticated, the strategic integration of human review—whether for quality assurance, edge case handling, or regulatory compliance—creates unique pricing considerations that traditional SaaS models simply cannot address. The question isn't whether to include human review in your AI product, but rather how to package and price this hybrid capability in a way that aligns costs with customer value while maintaining sustainable margins.

Human review credits have emerged as a critical component of AI product packaging, particularly in domains where accuracy, compliance, and trust are non-negotiable. According to pricing analysis from Monetizely, companies implementing systematic human-in-the-loop (HITL) pricing approaches have achieved revenue increases of 12-40% year-over-year. Yet despite this potential, many organizations struggle to structure these offerings effectively, often defaulting to cost-plus methodologies that leave significant value on the table or create misaligned incentives between provider and customer.

The central challenge lies in reconciling two fundamentally different cost structures: the variable, often volatile costs of AI infrastructure with the more predictable but labor-intensive costs of human review operations. This article provides a comprehensive framework for packaging human review credits in AI products, drawing on real-world implementations, market data, and strategic pricing principles to help product leaders, pricing strategists, and executives navigate this complex landscape.

Understanding the Economics of Human-AI Hybrid Services

Before designing a packaging strategy, it's essential to understand the underlying cost dynamics that make human-AI hybrid services fundamentally different from traditional software offerings. The economics of these systems involve multiple cost layers that behave differently as usage scales, creating both opportunities and constraints for pricing innovation.

The Cost Structure Reality

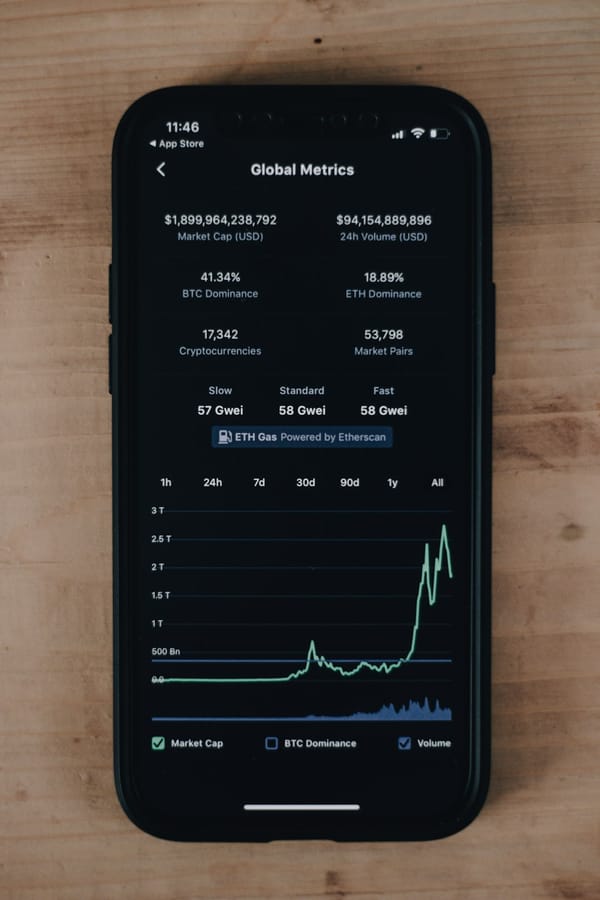

The AI-powered content moderation market, valued at $10.1 billion in 2025 and projected to reach $28.6 billion by 2034, provides valuable insights into hybrid service economics. Pure human review for expert verification typically costs approximately $10 per unit for tasks requiring nuanced judgment, such as brand safety classification or complex document verification. These human reviewers can process 4,500-5,000 items daily and achieve F1-scores of 0.95-0.98.

In contrast, AI models operate at dramatically different cost points. GPT-4o processes similar tasks at $0.83 per unit with an F1-score of 0.84, while more efficient models like Gemini-2.0-Flash cost $0.34 per unit (F1-score 0.88) and GPT-4o-mini drops to $0.05 per unit (F1-score 0.80). However, the real economic advantage emerges in hybrid configurations where AI handles approximately 90% of cases at the lower per-unit cost, escalating only 10% to human reviewers. This blended approach delivers 60-80% overall cost savings compared to pure human review while maintaining high accuracy standards.
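To make the blended economics concrete, the sketch below applies the per-unit figures above under one simplifying assumption: every item is first processed by the model, and 10% of items are additionally escalated to a $10 human review. With GPT-4o that works out to $0.83 plus 10% of $10, or $1.83 per item, roughly 82% below pure human review. The calculation ignores orchestration, QA sampling, and reviewer management overhead, which is one reason reported savings can land in a lower range.

```python
# Per-unit figures quoted in the text; the escalation model itself is an assumption.
HUMAN_COST_PER_ITEM = 10.00

AI_COST_PER_ITEM = {
    "GPT-4o": 0.83,
    "Gemini-2.0-Flash": 0.34,
    "GPT-4o-mini": 0.05,
}


def blended_cost(ai_cost: float, human_cost: float, escalation_rate: float) -> float:
    """Expected cost per item when the model screens every item and a
    fraction `escalation_rate` is also sent to a human reviewer."""
    if not 0.0 <= escalation_rate <= 1.0:
        raise ValueError("escalation_rate must be between 0 and 1")
    return ai_cost + escalation_rate * human_cost


def savings_vs_human(cost: float, human_cost: float) -> float:
    """Fractional saving relative to sending every item to a human reviewer."""
    return 1.0 - cost / human_cost


if __name__ == "__main__":
    for model, ai_cost in AI_COST_PER_ITEM.items():
        cost = blended_cost(ai_cost, HUMAN_COST_PER_ITEM, escalation_rate=0.10)
        saving = savings_vs_human(cost, HUMAN_COST_PER_ITEM)
        print(f"{model}: ${cost:.2f} per item, {saving:.0%} below pure human review")
```

Raising the escalation rate is the fastest way to erode these savings: at a 30% escalation rate, the human component alone costs $3 per item.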

The infrastructure costs for AI components remain volatile and vary significantly by model choice and task complexity. Foundation model costs have dropped over 90% in recent years, yet uncertainty persists about future pricing trajectories. This volatility creates a fundamental mismatch with the fixed labor costs associated with human review operations, making traditional flat-rate pricing models unsustainable for hybrid services.

Variable vs. Fixed Cost Dynamics

Every token processed, API call executed, and model inference performed requires GPU compute resources. A heavy user processing hundreds of millions of tokens might cost hundreds of dollars in infrastructure, while a light user processing 1,000 tokens costs a penny or less. This extreme variability stands in stark contrast to human review operations, which typically involve fixed labor costs per reviewer regardless of volume fluctuations within normal operating ranges.

According to industry research, companies sticking with seat-only pricing for AI products experience 40% lower gross margins compared to those adopting hybrid pricing models. The automation factor inherent in AI reduces the number of human users required to operate software, making traditional per-seat pricing increasingly irrelevant. Conversely, human review operations still benefit from seat-based models for access and training cost recovery.

This cost structure complexity requires sophisticated packaging approaches that can accommodate both predictable baseline costs and variable consumption patterns while ensuring sustainable margins across different customer usage profiles.

Strategic Packaging Frameworks for Human Review Credits

Effective packaging of human review credits requires moving beyond simple bundling to create strategic tier structures that align with customer segments, use cases, and value realization patterns. The most successful implementations combine multiple packaging dimensions to create flexibility while maintaining pricing clarity.

Tiered Subscription with Allocated Credits

The tiered subscription model with allocated human review credits has emerged as one of the most widely adopted frameworks for hybrid AI services. This approach combines a base monthly subscription fee with a predetermined allocation of human review credits, creating predictable revenue streams while accommodating variable usage patterns.

A representative implementation for contract analysis AI might structure tiers as follows:

Basic Tier: $500/month with 20 human review credits

Professional Tier: $1,200/month with 100 human review credits

Enterprise Tier: $3,000/month with 300 human review credits

This structure works particularly well when human review represents a minority of total processing volume but serves critical quality assurance or compliance functions. Customers can purchase additional credits beyond their allocation, creating expansion revenue opportunities while the base subscription covers platform access, AI processing, and a baseline level of human verification.
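The tier mechanics can be sketched as a small billing function. The fees and allocations come from the example tiers above; the $15 add-on price per extra credit is a hypothetical placeholder, since the text does not specify one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    monthly_fee: float
    included_credits: int


# Tier fees and allocations from the example above.
TIERS = [
    Tier("Basic", 500.0, 20),
    Tier("Professional", 1200.0, 100),
    Tier("Enterprise", 3000.0, 300),
]

# Hypothetical add-on price per credit beyond the allocation (not from the text).
OVERAGE_PRICE = 15.0


def monthly_bill(tier: Tier, credits_used: int, overage_price: float = OVERAGE_PRICE) -> float:
    """Subscription fee plus add-on credits purchased beyond the tier's allocation."""
    if credits_used < 0:
        raise ValueError("credits_used must be non-negative")
    extra_credits = max(0, credits_used - tier.included_credits)
    return tier.monthly_fee + extra_credits * overage_price


def cheapest_tier(credits_used: int) -> Tier:
    """Tier that minimizes the customer's bill for a given month's usage."""
    return min(TIERS, key=lambda tier: monthly_bill(tier, credits_used))
```

Comparing tiers this way shows the usage level at which upgrading becomes cheaper than buying add-on credits, which is a useful sanity check on both the allocations and the add-on price.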

The key to success with this model lies in carefully calibrating the credit allocation at each tier. Too few credits and customers constantly exceed their allocation, creating friction and potential churn. Too many credits and you leave revenue on the table while potentially encouraging inefficient usage patterns. According to research on HITL pricing strategies, the optimal approach involves analyzing customer usage patterns across segments to identify natural clustering points where credit allocations align with typical consumption.
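One simple way to look for a natural allocation point is a percentile heuristic (not a clustering algorithm): take each customer's typical monthly review count within a segment and set the allocation at, say, the 75th percentile, so roughly a quarter of customers would buy add-on credits. The usage data below is hypothetical; note the gap between 40 and 90, the kind of natural break worth inspecting before settling on a number.

```python
import math


def nearest_rank_percentile(values: list[int], pct: float) -> int:
    """Nearest-rank percentile: the smallest value covering pct% of observations."""
    if not values:
        raise ValueError("values must be non-empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


def share_exceeding(values: list[int], allocation: int) -> float:
    """Fraction of customers whose typical month would exceed the allocation."""
    return sum(1 for v in values if v > allocation) / len(values)


# Hypothetical typical monthly human-review counts for ten customers in one segment.
segment_usage = [5, 8, 12, 15, 18, 22, 25, 40, 90, 110]

allocation = nearest_rank_percentile(segment_usage, 75)
print(allocation, share_exceeding(segment_usage, allocation))
```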

Usage-Based Credits with Minimum Commitments

For customers with highly variable or unpredictable human review needs, a usage-based credit system with a minimum monthly commitment can provide greater flexibility while protecting baseline revenue. This model charges customers only for the human review credits they actually consume, subject to a minimum monthly commitment that ensures revenue stability.

A document processing platform might implement this as a $1,000 monthly minimum commitment with credits priced at $5 per human review. Customers who use fewer than 200 reviews per month pay the minimum; those exceeding this threshold pay only for actual consumption. This approach aligns particularly well with enterprise customers experiencing seasonal fluctuations or project-based workloads where human review volume varies significantly month-to-month.
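The billing rule reduces to taking the greater of actual usage charges and the floor. The $5 price and $1,000 minimum come from the example above; the quarterly workload is hypothetical.

```python
def committed_bill(reviews: int, price_per_review: float = 5.0,
                   monthly_minimum: float = 1000.0) -> float:
    """Usage-based charge with a floor: pay the greater of actual usage or the minimum."""
    if reviews < 0:
        raise ValueError("reviews must be non-negative")
    return max(monthly_minimum, reviews * price_per_review)


def break_even_reviews(price_per_review: float = 5.0, monthly_minimum: float = 1000.0) -> float:
    """Review count at which usage charges equal the minimum commitment."""
    return monthly_minimum / price_per_review


# Hypothetical seasonal workload: quiet months fall back to the floor.
quarter = [120, 200, 340]
print([committed_bill(n) for n in quarter])
```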

The minimum commitment serves multiple strategic purposes beyond revenue protection. It creates a psychological anchor that encourages consistent platform usage, helps customers justify the investment through regular engagement, and provides predictable cash flow for capacity planning. Research from pricing strategy firms indicates that minimum commitments reduce churn by 23% compared to pure pay-as-you-go models, as customers develop habitual usage patterns around their baseline commitment.

Outcome-Based Packaging

Outcome-based pricing represents a more sophisticated approach that ties human review credits to business results rather than activity metrics. Instead of charging per review or per credit, this model prices based on successfully completed outcomes that required human verification.

Zendesk exemplifies this approach in their customer service AI implementation, charging $0.99 per AI-resolved conversation while maintaining traditional per-seat pricing ($19-$115/seat/month) for human agent access. The outcome-based component inherently includes whatever human verification occurred to ensure resolution quality, without exposing customers to the complexity of understanding when AI versus human effort was applied.

This packaging approach proves particularly effective when customers care primarily about results rather than process. A medical coding AI might charge per correctly coded patient record regardless of whether AI handled it autonomously or required human review for edge cases. According to Deloitte's AI Business Case Builder, outcome-based pricing increases adoption rates by 35% compared to technology-based pricing for hybrid AI systems, as it shifts risk from the customer to the provider and aligns payment with value realization.

The challenge with outcome-based packaging lies in defining clear success criteria and establishing verification mechanisms that both parties trust. What constitutes a "successful" outcome must be measurable, attributable to the service, and valuable enough to justify the pricing. Additionally, providers must carefully model their cost-to-serve across different outcome scenarios to ensure margin sustainability as AI efficiency improves over time.
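A minimal cost-to-serve model for outcome-based pricing, assuming the model processes every outcome and a fraction of them also needs human review, shows how sensitive margin is to the escalation rate. The $4.00 price per correctly coded record, $0.30 AI cost, and $6.00 human review cost are hypothetical figures chosen for illustration. A fuller model would also account for rework on outcomes that fail verification and are not billed.

```python
def cost_to_serve(ai_cost: float, human_cost: float, escalation_rate: float) -> float:
    """Expected cost of one outcome: AI on every item, a human on escalated items."""
    return ai_cost + escalation_rate * human_cost


def outcome_margin(price: float, ai_cost: float, human_cost: float,
                   escalation_rate: float) -> float:
    """Gross margin per outcome as a fraction of the outcome price."""
    return (price - cost_to_serve(ai_cost, human_cost, escalation_rate)) / price


def max_escalation_rate(price: float, ai_cost: float, human_cost: float,
                        target_margin: float) -> float:
    """Highest escalation rate that still meets the target gross margin."""
    return (price * (1 - target_margin) - ai_cost) / human_cost


# Hypothetical figures: $4.00 per correctly coded record, $0.30 AI cost, $6.00 per human review.
for rate in (0.10, 0.30, 0.50):
    print(f"escalation {rate:.0%}: margin {outcome_margin(4.00, 0.30, 6.00, rate):.1%}")
```

Run in reverse, `max_escalation_rate` gives the escalation ceiling a given outcome price can absorb, which is the number to watch as customers push for lower prices once AI efficiency improves.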

Designing Credit Consumption Models

Beyond the overall packaging structure, the specific mechanics of how credits are consumed, replenished, and carried over significantly impact customer experience and revenue realization. These operational details often receive insufficient attention during pricing design, yet they fundamentally shape how customers perceive value and engage with the product.

Credit Valuation and Granularity

Determining the appropriate credit valuation—how much human review activity a single credit represents—requires balancing simplicity with precision. Overly granular credit systems (e.g., 0.1 credits for a basic review, 2.7 credits for complex verification) create cognitive overhead and make it difficult for customers to predict consumption. Overly coarse systems (e.g., one credit for any review regardless of complexity) create perceived unfairness when simple and complex reviews consume identical credits.

The most effective implementations establish 3-5 credit tiers based on review complexity and effort required:

Basic Review (1 credit): Simple yes/no verification, factual accuracy checks, standard compliance validation

Standard Review (3 credits): Nuanced judgment calls, context-dependent decisions, moderate expertise required

Expert Review (5 credits): Domain specialist verification, complex regulatory interpretation, high-stakes decisions

Premium Review (10 credits): Multi-expert consensus, detailed analysis with documentation, specialized certifications required

This tiered approach maintains simplicity while acknowledging that not all human reviews require equivalent effort or expertise. Customers can understand and predict their consumption patterns, while providers can ensure margin sustainability across different review types.
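The four tiers above map naturally onto an enumeration whose values are credit costs, which makes consumption forecasts a one-line sum. The monthly review mix below is hypothetical.

```python
from enum import Enum


class ReviewTier(Enum):
    """Credit cost per review, following the four tiers listed above."""
    BASIC = 1
    STANDARD = 3
    EXPERT = 5
    PREMIUM = 10


def credits_for(reviews: dict[ReviewTier, int]) -> int:
    """Total credits consumed by a month's mix of reviews, keyed by tier."""
    return sum(tier.value * count for tier, count in reviews.items())


# Hypothetical monthly mix for one customer.
forecast = {
    ReviewTier.BASIC: 100,
    ReviewTier.STANDARD: 20,
    ReviewTier.EXPERT: 5,
    ReviewTier.PREMIUM: 1,
}
print(credits_for(forecast))
```

Because the tier values are small round numbers, a customer can do this arithmetic by hand, which is the predictability the tiered approach is meant to deliver.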

Rollover Policies and Expiration

Credit rollover policies significantly impact customer psychology and usage patterns. Three primary approaches dominate the market, each with distinct strategic implications:

No Rollover (Use-It-or-Lose-It): Unused credits expire at the end of each billing period. This approach maximizes short-term revenue and encourages consistent usage, but can create customer frustration and perception of unfairness, particularly for customers with variable monthly needs. Research indicates this model works best when combined with lower base pricing and easy credit purchases, positioning unused credits as acceptable waste given the overall value proposition.

Limited Rollover: Credits roll over for one or two billing periods before expiring. This balanced approach addresses customer concerns about occasional low-usage months while preventing indefinite credit accumulation that could reduce future revenue. A common implementation allows credits to roll over for up to two additional periods, so credits granted in January can be used through March but expire if unused by then. This policy encourages regular engagement while providing flexibility for natural usage variability.

Unlimited Rollover with Annual True-Up: Credits roll over freely from month to month throughout an annual contract period, with a year-end reconciliation (the true-up). This enterprise-friendly approach works well for large customers with project-based or seasonal usage patterns. The annual true-up ensures customers eventually consume or forfeit their credits, while the extended timeframe accommodates legitimate business cycle variations.

According to research on subscription pricing mechanics, limited rollover policies achieve the optimal balance between customer satisfaction and revenue protection, reducing complaints by 47% compared to no-rollover approaches while maintaining 89% of the revenue capture.
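A limited rollover policy can be sketched as a ledger of credit lots, each tagged with the billing period it was granted in, spent oldest-first and dropped once it falls outside the rollover window. This is a hypothetical data model for illustration; setting `rollover_periods=0` gives use-it-or-lose-it behavior.

```python
from dataclasses import dataclass, field


@dataclass
class CreditLot:
    granted_period: int  # billing period index, e.g. 0 = January
    remaining: int


@dataclass
class RolloverLedger:
    # Extra periods a lot survives after the one it was granted in:
    # 0 = use-it-or-lose-it, 2 = January credits usable through March.
    rollover_periods: int = 2
    lots: list[CreditLot] = field(default_factory=list)

    def grant(self, period: int, credits: int) -> None:
        self.lots.append(CreditLot(period, credits))

    def _expire(self, period: int) -> None:
        # Drop lots that are past their rollover window or fully spent.
        self.lots = [
            lot for lot in self.lots
            if period - lot.granted_period <= self.rollover_periods and lot.remaining > 0
        ]

    def balance(self, period: int) -> int:
        self._expire(period)
        return sum(lot.remaining for lot in self.lots)

    def consume(self, period: int, credits: int) -> bool:
        """Spend credits oldest-first; spend nothing and return False if the balance is short."""
        if credits < 0:
            raise ValueError("credits must be non-negative")
        if self.balance(period) < credits:
            return False
        for lot in sorted(self.lots, key=lambda item: item.granted_period):
            taken = min(lot.remaining, credits)
            lot.remaining -= taken
            credits -= taken
            if credits == 0:
                break
        return True
```

Spending the oldest credits first is the customer-friendly choice: it minimizes how many credits expire unused, which is the complaint limited rollover is meant to address.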

Credit Pooling and Allocation

For enterprise customers with multiple teams, departments, or business units using the platform, credit pooling and allocation capabilities become essential packaging features. Two primary models address this need:

Centralized Pool: All credits exist in a single organizational pool that any authorized user can draw from. This simple approach works well for smaller organizations or those with centralized governance, but can create internal allocation challenges and potential conflicts when multiple teams compete for limited credits.

Allocated Budgets: Credits are distributed to specific teams or departments at the start of each period, with optional reallocation capabilities. This approach provides cost center accountability and prevents any single team from consuming the entire organization's credit allocation. Advanced implementations allow administrators to set usage alerts, establish approval workflows for credit transfers between teams, and generate detailed consumption reports by department.
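The allocated-budget model can be sketched as per-team balances with a usage alert and an approval-gated transfer. The 80% alert threshold and the boolean `approved` flag (standing in for whatever approval workflow an administrator configures) are hypothetical. A centralized pool is simply the degenerate case of a single team.

```python
from dataclasses import dataclass, field


@dataclass
class TeamBudget:
    allocated: int
    used: int = 0

    @property
    def remaining(self) -> int:
        return self.allocated - self.used


@dataclass
class OrgCredits:
    # Hypothetical policy: alert once a team crosses 80% of its budget.
    alert_threshold: float = 0.8
    teams: dict[str, TeamBudget] = field(default_factory=dict)
    alerts: list[str] = field(default_factory=list)

    def allocate(self, team: str, credits: int) -> None:
        self.teams[team] = TeamBudget(allocated=credits)

    def consume(self, team: str, credits: int) -> bool:
        """Draw from a team's own budget; refuse rather than borrow from other teams."""
        if credits <= 0:
            raise ValueError("credits must be positive")
        budget = self.teams[team]
        if credits > budget.remaining:
            return False
        before = budget.used / budget.allocated
        budget.used += credits
        after = budget.used / budget.allocated
        if before < self.alert_threshold <= after:
            self.alerts.append(f"{team} has used {after:.0%} of its credits")
        return True

    def transfer(self, source: str, target: str, credits: int, approved: bool) -> bool:
        """Move unused credits between teams once an approval workflow has signed off."""
        if not approved or credits <= 0 or credits > self.teams[source].remaining:
            return False
        self.teams[source].allocated -= credits
        self.teams[target].allocated += credits
        return True
```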

The strategic choice between these models depends on customer organizational structure and governance preferences. Enterprise packages often include both options, allowing customers to configure credit management according to their internal processes. This flexibility becomes a differentiating feature when competing for large accounts with complex organizational structures.

Pricing Human Review Credits: Finding the Right Price Points

Setting the actual price per credit or per review tier requires sophisticated analysis that balances cost recovery, competitive positioning, and value capture. Unlike pure software pricing where marginal costs approach zero, human review operations involve real labor costs that must be recovered while maintaining attractive margins.

Cost-Plus Foundations with Value Multipliers

The starting point for credit pricing involves understanding your fully-loaded cost per review type. This includes direct labor costs (reviewer wages and benefits), indirect costs (quality assurance, training, management overhead), and platform costs (tools, infrastructure, support systems); margin requirements are then layered on top of that cost. For a standard human review requiring 5-7 minutes of expert time, fully-loaded costs typically range from $3 to $8 depending on reviewer expertise level and geographic location.
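A back-of-envelope version of the fully-loaded cost calculation follows. Every rate in it (a $25 hourly wage, 30% benefits load, 40% indirect overhead, $0.50 platform cost per review) is a hypothetical placeholder, chosen so that a 6-minute review lands at about $5.05, inside the $3-8 range above. The margin step prices on gross margin, not markup: a 50% margin doubles the cost.

```python
def fully_loaded_cost(minutes: float, hourly_wage: float, benefits_rate: float = 0.30,
                      overhead_rate: float = 0.40, platform_cost: float = 0.50) -> float:
    """Cost of one review: loaded labor, plus QA/training/management overhead, plus tooling."""
    direct_labor = hourly_wage * (1 + benefits_rate) * minutes / 60
    indirect = direct_labor * overhead_rate
    return direct_labor + indirect + platform_cost


def cost_plus_price(cost: float, target_margin: float) -> float:
    """Price at which (price - cost) / price equals the target gross margin."""
    if not 0 <= target_margin < 1:
        raise ValueError("target_margin must be in [0, 1)")
    return cost / (1 - target_margin)


cost = fully_loaded_cost(minutes=6, hourly_wage=25.0)
print(round(cost, 2), round(cost_plus_price(cost, target_margin=0.5), 2))
```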

However, pricing based solely on cost-plus methodology leaves significant value on the table and fails to account for the strategic importance of human review in the overall customer workflow. A more sophisticated approach applies value multipliers based on several factors:

Criticality Multiplier (1.5-3x): Reviews that prevent costly errors, ensure regulatory compliance, or protect brand reputation justify higher pricing based on the downside they prevent rather than just the effort required. A compliance review that prevents a $100,000 regulatory fine delivers far more value than its $5 cost would suggest.

Speed Multiplier (1.2-2x): Expedited reviews with guaranteed SLA commitments command premium pricing. Standard reviews might be completed within 24 hours, while premium credits guarantee 2-hour turnaround, justifying 1.5-2x pricing for the operational complexity of maintaining rapid-response capacity.

Expertise Multiplier (2-5x): Reviews requiring specialized domain knowledge, professional certifications, or rare skill sets justify premium pricing. A financial compliance review by a certified accountant should cost significantly more than a general content moderation review, reflecting both the higher labor costs and the specialized value delivered.
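The multipliers can be applied as range-checked factors on top of the cost-plus base. Treating them as multiplicative and stackable is an assumption for this sketch; the ranges come from the list above, and the $5 base echoes the compliance example.

```python
# Multiplier ranges from the text; treating them as stackable factors is an assumption.
MULTIPLIER_RANGES = {
    "criticality": (1.5, 3.0),
    "speed": (1.2, 2.0),
    "expertise": (2.0, 5.0),
}


def value_based_price(base_price: float, **multipliers: float) -> float:
    """Apply each requested value multiplier to the base price, checking its range."""
    price = base_price
    for name, factor in multipliers.items():
        if name not in MULTIPLIER_RANGES:
            raise ValueError(f"unknown multiplier: {name}")
        low, high = MULTIPLIER_RANGES[name]
        if not low <= factor <= high:
            raise ValueError(f"{name} multiplier {factor} outside {low}-{high}x")
        price *= factor
    return price


print(value_based_price(5.0, speed=1.5))
print(value_based_price(5.0, criticality=2.0, expertise=3.0))
```

Stacking all three at their maximums would multiply the base price by 30 (3 x 2 x 5), which is one reason a real price list might cap the combined uplift.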

Competitive Benchmarking and Market Positioning

While cost-plus foundations ensure margin sustainability, competitive market analysis reveals what customers are willing to pay and how to position your offering. The hybrid AI service market shows significant pricing variation based on use case and value delivery:

Content Moderation: Pure AI services range from $0.05-0.83 per item, while hybrid services with human review typically charge $1.50-3.00 per reviewed item, representing a 3-6x premium over AI-only pricing.

Document Processing: AI extraction costs $0.10-0.50 per document, while human verification adds $2-5 per document depending on complexity and accuracy requirements.

Customer Service: AI-resolved conversations cost $0.50-1.50 per resolution, while human-escalated interactions cost $3-8 per resolution when including the human review component.

These benchmarks reveal that customers accept 3-10x premiums for human-verified outcomes compared to AI-only processing, provided the value proposition clearly articulates why human review matters for their specific use case. The key lies in positioning human review not as a necessary evil or cost center, but as a value-added service that delivers superior outcomes, risk mitigation, or compliance assurance.

Dynamic Pricing Considerations

More sophisticated implementations incorporate dynamic pricing elements that adjust credit costs based on demand, capacity, or customer commitment levels. Volume-based discounting represents the most common dynamic element, with marginal credit costs decreasing as customers commit to higher volumes:

1-100 credits/month: $8 per credit

101-500 credits/month: $6 per credit

501-2,000 credits/month: $4.50 per credit

2,001+ credits/month: $3.50 per credit (custom enterprise pricing)

This structure encourages larger commitments while ensuring smaller customers still have access to human review capabilities, albeit at higher per-unit costs that reflect the operational inefficiency of serving low-volume accounts.
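"Marginal credit costs decreasing" reads as graduated pricing, where each band's rate applies only to the credits inside that band. Some vendors instead charge every credit at the rate of the band the customer lands in (volume pricing). Both are sketched below using the bands listed above; the open-ended top band is treated as a list price even though the text notes it is custom enterprise pricing.

```python
# Bands from the list above: (upper bound of band in credits, price per credit).
# None marks the open-ended top band.
VOLUME_BANDS = [(100, 8.00), (500, 6.00), (2000, 4.50), (None, 3.50)]


def graduated_cost(credits: int, bands=VOLUME_BANDS) -> float:
    """Each band's price applies only to the credits that fall inside that band."""
    if credits < 0:
        raise ValueError("credits must be non-negative")
    total, lower = 0.0, 0
    for upper, price in bands:
        if credits <= lower:
            break
        top = credits if upper is None else min(credits, upper)
        total += (top - lower) * price
        if upper is None:
            break
        lower = upper
    return total


def volume_cost(credits: int, bands=VOLUME_BANDS) -> float:
    """Alternative: the band a customer lands in prices every credit they buy."""
    if credits < 0:
        raise ValueError("credits must be non-negative")
    for upper, price in bands:
        if upper is None or credits <= upper:
            return credits * price
    raise ValueError("bands must end with an open-ended band")
```

The difference matters at band edges: under volume pricing, 100 credits cost $800 but 101 credits cost $606, a cliff that graduated pricing avoids (600 credits cost $3,650 graduated versus $2,700 under volume pricing).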

Time-based dynamic pricing adjusts costs based on when reviews are requested. Standard queue reviews might cost $5 per credit with 24-hour SLA, while rush reviews requested during business hours cost $7.50 (1.5x multiplier) and after-hours or weekend rush reviews cost $10 (2x multiplier). This approach manages demand across time periods while capturing additional value from customers with urgent needs.
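The time-based rule can be expressed as a small pricing function. The $5 base and the 1.5x and 2x multipliers come from the paragraph above; the definition of business hours (09:00-16:59, Monday to Friday, in the review team's local time, with timezone-naive datetimes) is a hypothetical assumption.

```python
from datetime import datetime

BASE_CREDIT_PRICE = 5.00
# Hypothetical business hours: 09:00-16:59, Monday to Friday, in the review team's local time.
BUSINESS_HOURS = range(9, 17)


def review_price(requested_at: datetime, rush: bool, base: float = BASE_CREDIT_PRICE) -> float:
    """Standard queue at base price; rush at 1.5x in business hours, 2x after hours or weekends."""
    if not rush:
        return base
    is_weekday = requested_at.weekday() < 5  # Monday=0 ... Friday=4
    in_business_hours = is_weekday and requested_at.hour in BUSINESS_HOURS
    return base * (1.5 if in_business_hours else 2.0)


print(review_price(datetime(2025, 3, 4, 10, 0), rush=True))  # a Tuesday morning
```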

Integration with AI Product Pricing Architecture

Human review credits rarely exist in isolation; they must integrate coherently with the broader AI product pricing structure. The relationship between AI processing charges, platform access fees, and human review credits requires careful orchestration to create a unified pricing experience that customers can understand and predict.

Hybrid Pricing Model Integration

The most successful implementations combine multiple pricing dimensions into cohesive packages that address different cost drivers appropriately. A representative structure might include:

Platform Access Fee: $500-2,000/month based on tier, covering core platform features, user seats, basic support, and standard AI processing up to defined limits.

AI Consumption Credits: $0.10-0.50 per AI task beyond included allowances, charged for automated processing, inference calls, or model executions.

Human Review Credits: $3-10 per review based on complexity tier, charged for escalations requiring human verification or judgment.

This three-dimensional structure allows each component to scale appropriately with its underlying cost driver. Platform fees cover fixed costs and baseline capacity, AI credits align with variable infrastructure costs, and human review credits reflect labor-intensive verification work. According to research on hybrid pricing models, this approach protects providers from cost volatility while allowing them to share efficiency gains with customers as AI capabilities improve.
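A minimal invoice calculation shows how each dimension is priced against its own cost driver. The plan values ($1,000 platform fee, 5,000 included AI tasks, $0.20 per extra task, and review prices of $3, $6, and $10 by tier) are hypothetical picks from within the ranges above.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    platform_fee: float
    included_ai_tasks: int
    ai_overage_price: float
    review_prices: dict[str, float]  # price per human review, by complexity tier


def monthly_invoice(plan: Plan, ai_tasks: int, reviews: dict[str, int]) -> dict[str, float]:
    """Price each dimension against its own cost driver, then sum."""
    ai_overage = max(0, ai_tasks - plan.included_ai_tasks) * plan.ai_overage_price
    human_review = sum(plan.review_prices[tier] * count for tier, count in reviews.items())
    return {
        "platform": plan.platform_fee,
        "ai_overage": ai_overage,
        "human_review": human_review,
        "total": plan.platform_fee + ai_overage + human_review,
    }


# Hypothetical plan built from the ranges above.
plan = Plan(
    platform_fee=1000.0,
    included_ai_tasks=5000,
    ai_overage_price=0.20,
    review_prices={"basic": 3.0, "standard": 6.0, "expert": 10.0},
)
invoice = monthly_invoice(plan, ai_tasks=7000, reviews={"basic": 50, "standard": 20, "expert": 5})
print(invoice)
```

Keeping the three lines separate on the invoice is what lets a customer see that, in this example, 2,000 extra AI tasks added $400 while 75 human reviews added $320.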

The critical integration point lies in the escalation mechanism—how and when the system transitions from AI processing to human review. Transparent escalation rules help customers predict costs and build trust in the hybrid system. Clear policies might specify:

- AI confidence scores below 85% automatically trigger human review

- Customer-flagged items always receive human verification

- Random sampling of 5% of AI decisions for quality assurance

- Regulatory or compliance-