AI pricing for hybrid cloud, sovereign cloud, and private cloud deployments

The enterprise AI landscape is undergoing a fundamental transformation in how organizations deploy and price intelligent systems. As data sovereignty concerns intensify, regulatory requirements tighten, and security demands escalate, the traditional public cloud AI pricing paradigm is giving way to a more nuanced ecosystem encompassing hybrid cloud, sovereign cloud, and private cloud deployments. This shift represents not merely a technical evolution but a strategic recalibration of how enterprises balance innovation velocity, regulatory compliance, cost predictability, and competitive advantage.

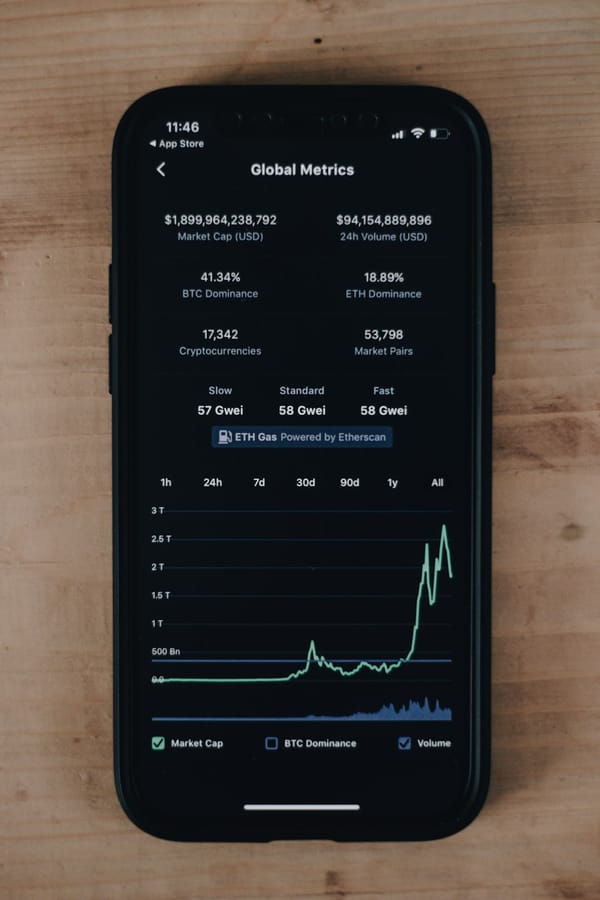

The stakes are substantial. According to CloudZero research, average monthly AI spending reached $85,521 in 2025, a 36% increase from $62,964 in 2024. Yet this figure masks profound variations across deployment models: private cloud implementations require $50,000-$500,000 in upfront infrastructure costs while offering potential savings of 60-70% at high utilization rates. Meanwhile, the sovereign cloud market is projected to grow from an estimated $123-154 billion in 2025 to $823 billion by 2032, driven by geopolitical tensions and regulatory mandates that fundamentally reshape where AI workloads can legally execute.

For pricing strategists, this fragmentation creates unprecedented complexity. Organizations must now architect pricing models that accommodate radically different cost structures, risk profiles, and value propositions across deployment environments. The pricing framework that works for consumption-based public cloud AI fails spectacularly when applied to capital-intensive private deployments or compliance-heavy sovereign infrastructures. Understanding these distinctions is no longer optional—it's essential for competitive survival in an increasingly regulated, geopolitically fractured AI marketplace.

Why Deployment Environment Fundamentally Alters AI Pricing Economics

The deployment environment for AI systems creates distinct economic profiles that directly impact pricing model viability. Unlike traditional software where deployment location primarily affects latency and availability, AI deployment environments fundamentally alter cost structures, risk allocation, and value delivery mechanisms.

Public cloud AI deployments operate on marginal cost economics where every inference incurs compute charges. Hyperscaler services such as Microsoft's Azure OpenAI charge $5 per million input tokens and $15 per million output tokens for GPT-4o, with costs varying dramatically based on model selection. This creates inherent volatility: 65% of organizations using consumption-based pricing report unexpected charges, with budget variance of ±30-50% according to recent adoption studies. The pay-as-you-go model transfers infrastructure risk to the provider but creates revenue unpredictability for AI vendors and cost uncertainty for enterprise buyers.
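To make the marginal-cost arithmetic concrete, the sketch below turns the per-token rates quoted above into a monthly bill and a budget band. The token volumes and function names are assumptions made for illustration, not figures from any provider.

```python
# Rates quoted in the text for GPT-4o (USD per million tokens).
INPUT_RATE_PER_M = 5.00
OUTPUT_RATE_PER_M = 15.00


def monthly_token_cost(input_tokens: int, output_tokens: int) -> float:
    """Consumption cost: every token is billed at its per-million rate."""
    input_cost = (input_tokens / 1_000_000) * INPUT_RATE_PER_M
    output_cost = (output_tokens / 1_000_000) * OUTPUT_RATE_PER_M
    return input_cost + output_cost


def budget_band(expected: float, variance: float) -> tuple[float, float]:
    """Low and high spend if actuals swing by +/- variance (0.30 means 30%)."""
    return expected * (1 - variance), expected * (1 + variance)


# Hypothetical workload: 2B input tokens and 500M output tokens per month.
expected = monthly_token_cost(2_000_000_000, 500_000_000)
low, high = budget_band(expected, 0.30)
print(f"expected ${expected:,.0f}, band ${low:,.0f} to ${high:,.0f}")
```

With these inputs the expected bill is $17,500, and a 30% swing spans $12,250 to $22,750, which is the kind of spread that makes consumption pricing hard to budget.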

Private cloud deployments invert this economic model entirely. Organizations invest $50,000-$500,000 upfront in dedicated GPU infrastructure (4x A100 80GB or 2x H100 GPUs for medium implementations, scaling to 8x H100 clusters for enterprise deployments supporting 1,000+ users), then incur ongoing operational costs of $2,000-$30,000 monthly for compute, $500-$5,000 for storage, and approximately $40,000 annually for power and maintenance. This capital-intensive approach becomes economically favorable at 60-70% utilization rates compared to public cloud equivalents, according to Deloitte analysis. Per-token costs can drop to $0.11 per million output tokens—18 times cheaper than public cloud alternatives—but require sustained high utilization to justify the initial investment.
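The utilization threshold can be sketched as a simple break-even calculation: a private cluster has a roughly fixed annual cost, so its effective per-token cost falls as utilization rises. The model below is a minimal sketch under stated assumptions. The three-year amortization horizon and the cluster's token capacity are hypothetical inputs chosen so the arithmetic is easy to follow, and the class and method names are illustrative.

```python
from dataclasses import dataclass


@dataclass
class PrivateDeployment:
    capex: float                       # upfront infrastructure spend, USD
    amortization_years: float          # assumed depreciation horizon
    monthly_opex: float                # compute and storage operations, USD/month
    annual_power_maintenance: float    # USD/year
    capacity_m_tokens_per_year: float  # hypothetical output at 100% utilization

    def annual_cost(self) -> float:
        """Fixed yearly cost, independent of how busy the cluster is."""
        return (
            self.capex / self.amortization_years
            + 12 * self.monthly_opex
            + self.annual_power_maintenance
        )

    def cost_per_m_tokens(self, utilization: float) -> float:
        """Effective cost per million tokens at a utilization in (0, 1]."""
        if not 0 < utilization <= 1:
            raise ValueError("utilization must be in (0, 1]")
        return self.annual_cost() / (self.capacity_m_tokens_per_year * utilization)

    def break_even_utilization(self, public_rate_per_m: float) -> float:
        """Utilization at which private cost per token equals the public rate."""
        return self.annual_cost() / (
            self.capacity_m_tokens_per_year * public_rate_per_m
        )


cluster = PrivateDeployment(
    capex=300_000,
    amortization_years=3,
    monthly_opex=10_000,
    annual_power_maintenance=40_000,
    capacity_m_tokens_per_year=26_000,  # illustrative only
)
print(f"annual cost: ${cluster.annual_cost():,.0f}")
print(f"break-even vs $15/M: {cluster.break_even_utilization(15.0):.0%}")
```

A result above 100% would mean the cluster cannot beat the public rate at any utilization, which is why the capacity and amortization assumptions deserve more scrutiny than the hardware price itself.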

Hybrid cloud architectures blend these models, running sensitive or high-volume workloads on-premises while leveraging public cloud for burst capacity, specialized models, or development environments. This creates pricing complexity as organizations must account for both capital expenditure amortization and variable consumption charges. Hybrid pricing models, which combine subscription fees with usage-based components, now dominate AI vendor strategies, with 49% adoption among AI providers as of 2025. These models balance seller margin requirements (targeting margins of 60-70% after AI compute costs) with buyer needs for predictability, using base fees to cover fixed infrastructure costs and variable charges for actual usage.
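One way to read the margin target is as a markup rule applied separately to the fixed and variable cost components. The sketch below assumes the vendor prices both components at the same target margin. The cost figures and function names are hypothetical.

```python
def price_for_margin(cost: float, target_margin: float) -> float:
    """Price at which (price - cost) / price equals target_margin."""
    if not 0 <= target_margin < 1:
        raise ValueError("target_margin must be in [0, 1)")
    return cost / (1 - target_margin)


def hybrid_invoice(base_fee: float, unit_price: float, usage_units: float) -> float:
    """Subscription component plus usage-based component."""
    return base_fee + unit_price * usage_units


def margin(revenue: float, cost: float) -> float:
    """Margin as a fraction of revenue."""
    return (revenue - cost) / revenue


# Hypothetical vendor costs for one customer.
FIXED_COST = 7_000.0   # monthly fixed infrastructure cost, USD
UNIT_COST = 3.50       # variable compute cost per million tokens, USD
TARGET_MARGIN = 0.65   # inside the 60-70% range cited above

base_fee = price_for_margin(FIXED_COST, TARGET_MARGIN)
unit_price = price_for_margin(UNIT_COST, TARGET_MARGIN)

usage = 1_000  # million tokens consumed this month (hypothetical)
revenue = hybrid_invoice(base_fee, unit_price, usage)
cost = FIXED_COST + UNIT_COST * usage
print(f"base fee ${base_fee:,.2f}, unit price ${unit_price:,.2f}")
print(f"blended margin {margin(revenue, cost):.1%}")
```

Because both components carry the same markup, the blended margin stays at the target regardless of usage. Pricing the base fee at a thinner margin would make the overall margin depend on how much the customer consumes.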

Sovereign cloud deployments add regulatory compliance premiums of 10-30% over standard public cloud pricing due to isolated infrastructure, screened personnel, and enhanced audit capabilities. Google's Sovereign Cloud commands 10-20% premiums, Oracle EU Sovereign Cloud 15-30%, and AWS GovCloud 20-30% over comparable public cloud services. However, government subsidies and strategic discounts often reduce effective costs, while the value proposition extends beyond pure economics to encompass regulatory compliance, geopolitical risk mitigation, and data sovereignty assurance—factors that traditional ROI calculations struggle to capture.
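The premium ranges above translate directly into a cost band for a given public cloud baseline. The sketch below applies them, with an optional subsidy rate to show how incentives narrow the gap. The `subsidy_rate` parameter and the $100,000 baseline are assumptions for illustration, since the text gives no specific subsidy figures per provider.

```python
# Premium ranges over comparable public cloud pricing, as quoted in the text.
SOVEREIGN_PREMIUMS = {
    "Google Sovereign Cloud": (0.10, 0.20),
    "Oracle EU Sovereign Cloud": (0.15, 0.30),
    "AWS GovCloud": (0.20, 0.30),
}


def sovereign_cost_range(
    public_cost: float,
    premium_range: tuple[float, float],
    subsidy_rate: float = 0.0,  # hypothetical fraction of the bill offset by incentives
) -> tuple[float, float]:
    """Low and high sovereign cost for a given public cloud baseline."""
    low_premium, high_premium = premium_range
    net = 1 - subsidy_rate
    return public_cost * (1 + low_premium) * net, public_cost * (1 + high_premium) * net


baseline = 100_000.0  # hypothetical monthly public cloud spend, USD
for provider, premiums in SOVEREIGN_PREMIUMS.items():
    low, high = sovereign_cost_range(baseline, premiums)
    print(f"{provider}: ${low:,.0f} to ${high:,.0f}")
```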

The pricing implication is clear: deployment environment selection is not a technical decision to be made after pricing strategy is set, but a fundamental input that determines which pricing models are economically viable and strategically aligned with customer value perception.

The Hybrid Cloud Pricing Challenge: Balancing Flexibility and Predictability

Hybrid cloud AI deployments present unique pricing challenges because they combine fundamentally different economic models within a single customer relationship. Organizations adopting hybrid approaches typically run baseline workloads on private infrastructure while using public cloud resources for peak demand, specialized capabilities, or geographic expansion. This operational model requires pricing structures that accommodate both capital-intensive on-premises investments and variable cloud consumption.

According to Foundry's 2025 survey of 300+ enterprise decision-makers, AI and hybrid IT are fundamentally reshaping infrastructure strategies. Organizations increasingly demand deployment flexibility that allows workloads to move between environments based on performance requirements, regulatory constraints, and cost optimization opportunities. This technical flexibility must be matched by pricing flexibility that doesn't penalize customers for architectural sophistication.

The subscription-plus-usage hybrid model has emerged as the dominant approach, adopted by 49% of AI vendors as of 2025. This framework typically includes a base subscription fee covering platform access, governance tools, and a committed usage allocation, combined with consumption-based charges for usage exceeding baseline commitments. Salesforce's AI suite exemplifies this approach with per-digital-agent fees plus interaction-based charges, while OpenAI's enterprise platform combines platform access fees with token-based usage pricing.

The economic rationale is compelling for both vendors and customers. Vendors secure predictable baseline revenue to cover fixed infrastructure costs while maintaining margin protection through usage-based components that reflect actual AI compute expenses. Customers gain budget predictability through the subscription component while retaining the flexibility to scale usage without renegotiating contracts. The model addresses the fundamental challenge of AI economics: the reintroduction of significant marginal costs (GPU compute, API calls to foundation models) that traditional SaaS subscription models were designed to eliminate.

However, implementation complexity is substantial. Organizations must establish clear delineation between on-premises and cloud-consumed resources, implement robust metering across hybrid environments, and create transparent cost allocation mechanisms that customers can understand and validate. According to research on hybrid pricing implementation, effective models require:

Clear baseline definitions that specify what infrastructure, capacity, or usage volume the subscription component covers. Ambiguity here creates billing disputes and customer dissatisfaction. For hybrid deployments, this might include dedicated on-premises capacity plus a committed cloud usage allocation.

Transparent overage pricing that customers can predict and budget for. The most successful implementations provide usage dashboards, predictive alerts when approaching thresholds, and graduated overage rates that reward increased consumption rather than penalizing it. Azure AI Gateway pricing, for instance, starts at approximately $147 monthly for standard tiers but scales significantly for enterprise environments requiring sophisticated governance and routing capabilities.

Deployment-appropriate metrics that align with how customers actually consume value across hybrid environments. Token-based pricing works well for cloud API calls but may be inappropriate for on-premises deployments where customers have already invested in infrastructure. Alternative metrics might include active users, processed transactions, or business outcomes achieved.

Commitment flexibility that accommodates workload migration between environments. As organizations optimize hybrid deployments, workload placement changes. Pricing models that lock customers into rigid environment-specific commitments create friction and reduce the strategic value of hybrid architectures. More sophisticated approaches provide pooled commitments that can be consumed across deployment environments, potentially with environment-specific conversion rates reflecting underlying cost differences.
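The pooled-commitment idea and graduated overage rates described above can be sketched together. In this minimal example, usage in each environment burns commitment units at an environment-specific conversion rate, and usage beyond the commitment is billed at rates that fall as volume grows. All conversion rates, tier boundaries, and prices are hypothetical.

```python
from typing import Optional

# Hypothetical conversion rates: commitment units burned per million tokens.
CONVERSION = {"private": 0.4, "public": 1.0, "sovereign": 1.25}

# Hypothetical graduated overage tiers: (upper bound in units, price per unit).
# An upper bound of None means the tier is unbounded.
OVERAGE_TIERS: list[tuple[Optional[float], float]] = [
    (1_000, 12.0),
    (5_000, 10.0),
    (None, 8.0),
]


def units_consumed(usage_m_tokens: dict[str, float]) -> float:
    """Convert per-environment usage into pooled commitment units."""
    return sum(CONVERSION[env] * amount for env, amount in usage_m_tokens.items())


def overage_charge(overage_units: float) -> float:
    """Bill each slice of overage at its own tier rate."""
    charge = 0.0
    lower = 0.0
    for upper, price in OVERAGE_TIERS:
        if overage_units <= lower:
            break
        top = overage_units if upper is None else min(overage_units, upper)
        charge += (top - lower) * price
        if upper is None:
            break
        lower = upper
    return charge


def invoice(base_fee: float, committed_units: float, usage: dict[str, float]) -> float:
    """Base subscription plus graduated charges for units beyond the commitment."""
    overage = max(0.0, units_consumed(usage) - committed_units)
    return base_fee + overage_charge(overage)


usage = {"private": 2_000, "public": 1_500, "sovereign": 400}
print(f"units consumed: {units_consumed(usage):,.0f}")
print(f"invoice: ${invoice(20_000.0, 2_000, usage):,.2f}")
```

Because the commitment is denominated in pooled units, moving a workload from public to private infrastructure changes the burn rate but does not strand any of the commitment.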

The pricing strategy must also address the temporal dimension of hybrid deployments. Many organizations begin with public cloud AI, then migrate high-volume workloads to private infrastructure as utilization justifies capital investment. According to analysis of on-premise AI economics, this migration becomes favorable once sustained utilization of the private infrastructure reaches 60-70%, the point at which its cost falls below equivalent cloud spend. Pricing models should facilitate rather than penalize this evolution, potentially offering migration credits, commitment portability, or graduated pricing that rewards increased total consumption regardless of deployment location.

For organizations selling AI capabilities in hybrid environments, the strategic imperative is creating pricing architectures that are deployment-agnostic from the customer's perspective while accurately reflecting underlying cost structures. This might involve unified pricing that abstracts deployment complexity, or transparent multi-tier pricing that helps customers optimize their own deployment decisions. The comparative economics of cloud versus on-premise AI deployments provide essential context for these pricing decisions, particularly regarding break-even analysis and total cost of ownership calculations that inform customer deployment choices.

Sovereign Cloud AI: Pricing for Compliance, Control, and Geopolitical Risk

Sovereign cloud represents perhaps the most complex pricing environment in the AI ecosystem because it explicitly monetizes attributes—data residency, regulatory compliance, geopolitical independence—that resist traditional value quantification. Organizations don't choose sovereign cloud deployments primarily for technical superiority or cost efficiency; they choose them because regulatory requirements, competitive positioning, or risk management imperatives make alternatives untenable.

This fundamentally alters pricing dynamics. Sovereign cloud AI pricing typically carries 10-30% premiums over comparable public cloud services, yet demand is surging. The sovereign cloud market is projected to grow from an estimated $123-154 billion in 2025 to $823 billion by 2032, with Gartner predicting 65% of governments will introduce technological sovereignty requirements by 2028. According to IDC data, 63% of organizations are now more likely to adopt sovereign cloud services specifically due to recent geopolitical events, with three-fourths of business leaders expressing concern about storing data in global cloud environments.

Regulatory compliance forms the primary value driver for sovereign cloud pricing. The EU's Digital Services Act mandates data protection, localization, and transparency requirements that standard public cloud deployments struggle to satisfy. GDPR tensions with the US CLOUD Act create legal uncertainty that sovereign clouds explicitly resolve through jurisdictional isolation. Australia, India, and Middle Eastern nations expanded AI data residency rules throughout 2024-2025, creating region-specific compliance requirements that global cloud providers cannot universally address.

For regulated industries—healthcare, financial services, government, defense—sovereign cloud isn't a premium option but a compliance necessity. Healthcare organizations must satisfy FDA approval requirements for AI medical devices alongside HIPAA compliance. Financial institutions must balance anti-discrimination laws with algorithmic lending decisions while maintaining audit trails that satisfy multiple regulatory jurisdictions. Government contractors face security clearance requirements and export controls that restrict deployment options to certified sovereign environments.

The pricing challenge is that compliance costs are real and substantial, yet customers resist explicit "compliance surcharges" that feel like penalties for regulatory requirements beyond their control. More sophisticated pricing approaches integrate compliance value into broader value propositions:

Predictable pricing structures that eliminate the volatility inherent in multi-jurisdictional public cloud deployments. Sovereign clouds like those offered by NexGen Cloud emphasize predictable pricing alongside data localization and legal safety—critical for AI companies in regions with high tariffs or regulatory uncertainty. This predictability itself carries value for CFOs managing AI budgets that have proven notoriously difficult to forecast.

Risk-adjusted pricing that explicitly accounts for geopolitical and regulatory risk mitigation. Rather than framing sovereign cloud as "more expensive," effective pricing positions it as "risk-adjusted" or "compliance-inclusive," bundling regulatory assurance, audit support, and legal certainty into the base offering. This reframes the price premium as insurance against regulatory penalties, data breach liability, and geopolitical disruption.

Government subsidies and incentives that reduce effective customer costs. Governments provide subsidies and tax breaks to offset 15-30% premiums and encourage private sector adoption of sovereign infrastructure. AWS's €7.8 billion European Sovereign Cloud investment in Germany (launched late 2025) and Microsoft's air-gapped Sovereign Private Cloud in France and Germany (June 2025) both benefit from regional incentives designed to build strategic AI capabilities within European jurisdictions.

Tiered sovereignty offerings that allow customers to select appropriate compliance levels. Not all workloads require maximum sovereignty—some need data residency, others require operational sovereignty, and the most sensitive demand complete jurisdictional isolation. Oracle's EU Sovereign Cloud, for instance, offers different tiers with 15-30% premiums depending on isolation requirements, allowing customers to optimize costs by deploying only truly sensitive workloads in the highest-cost tiers.
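The cost effect of tiered sovereignty can be illustrated by comparing two placement policies: putting every workload in the highest tier, or placing each workload in the lowest tier that meets its requirement. The per-tier premiums below are hypothetical points inside the 15-30% range mentioned above, and the workloads are invented for the example.

```python
from enum import IntEnum


class Sovereignty(IntEnum):
    DATA_RESIDENCY = 1
    OPERATIONAL = 2
    JURISDICTIONAL_ISOLATION = 3


# Hypothetical premium per tier, within the 15-30% range cited in the text.
TIER_PREMIUM = {
    Sovereignty.DATA_RESIDENCY: 0.15,
    Sovereignty.OPERATIONAL: 0.22,
    Sovereignty.JURISDICTIONAL_ISOLATION: 0.30,
}

# (name, monthly public-cloud baseline in USD, minimum required tier)
WORKLOADS = [
    ("usage analytics", 40_000.0, Sovereignty.DATA_RESIDENCY),
    ("claims triage", 25_000.0, Sovereignty.OPERATIONAL),
    ("classified search", 10_000.0, Sovereignty.JURISDICTIONAL_ISOLATION),
]


def tiered_cost(workloads) -> float:
    """Each workload runs in the lowest tier that satisfies its requirement."""
    return sum(base * (1 + TIER_PREMIUM[tier]) for _, base, tier in workloads)


def uniform_cost(workloads, tier: Sovereignty) -> float:
    """Every workload runs in the same tier, which must cover the strictest need."""
    if any(required > tier for _, _, required in workloads):
        raise ValueError("chosen tier does not satisfy every workload")
    return sum(base * (1 + TIER_PREMIUM[tier]) for _, base, _ in workloads)


tiered = tiered_cost(WORKLOADS)
uniform = uniform_cost(WORKLOADS, Sovereignty.JURISDICTIONAL_ISOLATION)
print(f"tiered ${tiered:,.0f} vs uniform ${uniform:,.0f}")
```

The gap between the two totals grows with the share of spend that only needs data residency, which is the case for tier-aware placement.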

The strategic pricing question for sovereign cloud providers is whether to position sovereignty as a differentiated feature that commands a price premium, or as a standard capability included in base pricing to maximize market penetration. AWS GovCloud and Azure Government historically commanded 20-30% premiums for US government workloads, but as sovereignty becomes mainstream rather than niche, competitive dynamics may compress these premiums. Google's NATO AI contract (November 2025) and the proliferation of sovereign cloud options suggest that sovereignty is transitioning from premium differentiator to table-stakes requirement in regulated markets.

For AI vendors pricing solutions deployed in sovereign environments, the critical consideration is whether to offer sovereign-specific SKUs with distinct pricing, or maintain pricing parity across deployment environments while adjusting commercial terms (committed usage, contract duration, support levels) to reflect the higher costs of sovereign infrastructure. The former provides pricing transparency but may create customer resistance to "sovereignty taxes," while the latter maintains pricing simplicity but requires careful margin management across deployment types.

According to CSIS analysis, achieving sovereign priorities while minimizing costs requires policy coordination, infrastructure sharing, and realistic assessment of which workloads genuinely require sovereign deployment. The pricing implication is that vendors should help customers make informed sovereignty decisions rather than universally recommending sovereign deployments that may be unnecessarily expensive for workloads without genuine compliance requirements.

Private Cloud Economics: Capital Intensity and the 60% Utilization Threshold

Private cloud AI deployments represent the most capital-intensive deployment model, requiring organizations to make substantial upfront infrastructure investments in exchange for long-term operational cost advantages. This economic profile creates unique pricing challenges because the vendor's cost structure (if providing managed private cloud) or the customer's cost structure (if self-deploying) differs fundamentally from consumption-based public cloud models.

The economics are well-documented. Private AI deployment requires $50,000-$500,000 in upfront infrastructure costs for medium-to-enterprise scale implementations, with ongoing monthly operations of $2,000-$30,000. A typical medium enterprise setup includes 4x A100 80GB or 2x H100 GPUs ($60,000-$120,000), supporting 70B parameter models and multiple concurrent users. Enterprise deployments serving 1,000+ users require 8x H100 or larger GPU clusters ($200,000-$500,000), plus networking and storage infrastructure ($50,000-$150,000), data center modifications ($25,000-$100,000), and software licensing ($20,000-$100,000).
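Summing the itemized enterprise components gives a quick check on the total upfront range. The sketch below uses only the figures quoted above; the dictionary keys are illustrative labels.

```python
# Enterprise-scale upfront cost components (low, high) in USD, from the text.
ENTERPRISE_CAPEX = {
    "gpu_cluster_8x_h100_or_larger": (200_000, 500_000),
    "networking_and_storage": (50_000, 150_000),
    "data_center_modifications": (25_000, 100_000),
    "software_licensing": (20_000, 100_000),
}


def total_range(components: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Add up the low ends and the high ends separately."""
    low = sum(lo for lo, _ in components.values())
    high = sum(hi for _, hi in components.values())
    return low, high


low, high = total_range(ENTERPRISE_CAPEX)
print(f"enterprise upfront range: ${low:,} to ${high:,}")
```

The components sum to $295,000 to $850,000, so the upper end exceeds the $500,000 headline figure. The headline range may refer to GPU hardware alone, although the source does not say so explicitly. Either way, budgeting from the itemized total is the more conservative choice.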

The critical economic threshold is 60-70% utilization, the point at which on-premise deployment becomes economically favorable compared to public cloud equivalents according to Deloitte analysis. Below this threshold, the capital intensity of private deployment creates higher total cost of ownership; above it, eliminating per-token charges and sharing infrastructure across workloads creates substantial savings. Lenovo's 2026 analysis of generative AI total cost of ownership confirms that on-premise TCO is lowest for high-utilization serving workloads, with per-token costs dropping to $0.11 per million output tokens, 18 times cheaper than public cloud alternatives.

This creates a fundamental pricing challenge: how should vendors price AI solutions when customers make the deployment decision based on utilization economics rather than vendor pricing? Several approaches have emerged:

Capacity-based pricing that charges for deployed infrastructure rather than actual usage. This aligns with the economics of private deployment where costs are predominantly fixed once infrastructure is deployed. Pricing might be based on GPU count, total VRAM capacity, or maximum concurrent users supported. This approach provides predictable costs for customers and vendors alike, but may feel disconnected from value delivery if utilization varies significantly.

Subscription pricing with capacity tiers that bundles software licensing, support, and updates into annual fees scaled to deployment size. This is common for AI platforms deployed on customer-owned infrastructure, where the vendor provides software but not hardware. Pricing tiers might correspond to small (4-8 GPUs), medium (8-16 GPUs), and enterprise (16+ GPUs) deployments, with annual subscription fees ranging from $20,000-$100,000+ depending on scale and support levels.

Outcome-based pricing that ties costs to business results rather than infrastructure or usage metrics. This approach works particularly well for private deployments in regulated industries where the value proposition centers on compliance assurance, risk mitigation, and competitive advantage rather than marginal cost savings. Healthcare implementations might price based on patient interactions handled, financial services based on fraud cases prevented, or manufacturing based on quality defects identified.

Hybrid private-public pricing that combines private deployment for baseline workloads with public cloud bursting for peak demand. This requires careful metering and cost allocation across environments, but allows customers to optimize infrastructure utilization while maintaining deployment flexibility. Pricing might include a base fee for private deployment management plus consumption charges for public cloud overflow, with total costs designed to remain below pure public cloud alternatives at typical utilization levels.
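Two of the approaches above lend themselves to a short sketch: mapping a deployment to a capacity tier, and pricing private baseline capacity with public cloud overflow. The tier ranges quoted above overlap at 8 and 16 GPUs, so the boundary handling here is an assumption (ties go to the smaller tier), as are the base fee, capacity, and overflow rate.

```python
def capacity_tier(gpu_count: int) -> str:
    """Map a GPU count to a subscription tier.

    Assumption: the quoted ranges overlap at 8 and 16 GPUs, so this sketch
    assigns boundary counts to the smaller tier.
    """
    if gpu_count < 4:
        raise ValueError("below the smallest tier covered by this sketch")
    if gpu_count <= 8:
        return "small"
    if gpu_count <= 16:
        return "medium"
    return "enterprise"


def hybrid_monthly_cost(
    demand_m_tokens: float,
    private_capacity_m_tokens: float,
    base_fee: float,
    overflow_rate_per_m: float,
) -> float:
    """Base fee covers private capacity; only the overflow is billed per token."""
    overflow = max(0.0, demand_m_tokens - private_capacity_m_tokens)
    return base_fee + overflow * overflow_rate_per_m


def pure_public_cost(demand_m_tokens: float, public_rate_per_m: float) -> float:
    """Everything billed at the public per-token rate."""
    return demand_m_tokens * public_rate_per_m


# Hypothetical month: demand peaks above what the private cluster can serve.
demand = 3_000.0     # million tokens
capacity = 2_500.0   # million tokens the private cluster can serve
hybrid = hybrid_monthly_cost(demand, capacity, base_fee=25_000.0, overflow_rate_per_m=15.0)
public = pure_public_cost(demand, 15.0)
print(f"tier for 12 GPUs: {capacity_tier(12)}")
print(f"hybrid ${hybrid:,.0f} vs pure public ${public:,.0f}")
```

The comparison only favors the hybrid model while demand stays high enough to absorb the base fee. At low demand the pure public bill would be smaller, which mirrors the utilization threshold discussed earlier.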

The private cloud market is substantial and growing. Valued at $114.4 billion in 2024 and projected to reach $195 billion by 2030, the market is led by IBM, Microsoft, AWS, Dell, VMware, HPE, and Oracle, all offering hybrid solutions with AI integration and managed services. This growth reflects enterprise recognition that private deployment offers compelling economics for sustained, high-volume AI workloads, particularly those involving proprietary data or regulatory compliance requirements.

For pricing strategists, the critical insight is that private cloud AI pricing must account for the temporal cost structure rather than just marginal costs. Year one total costs for a